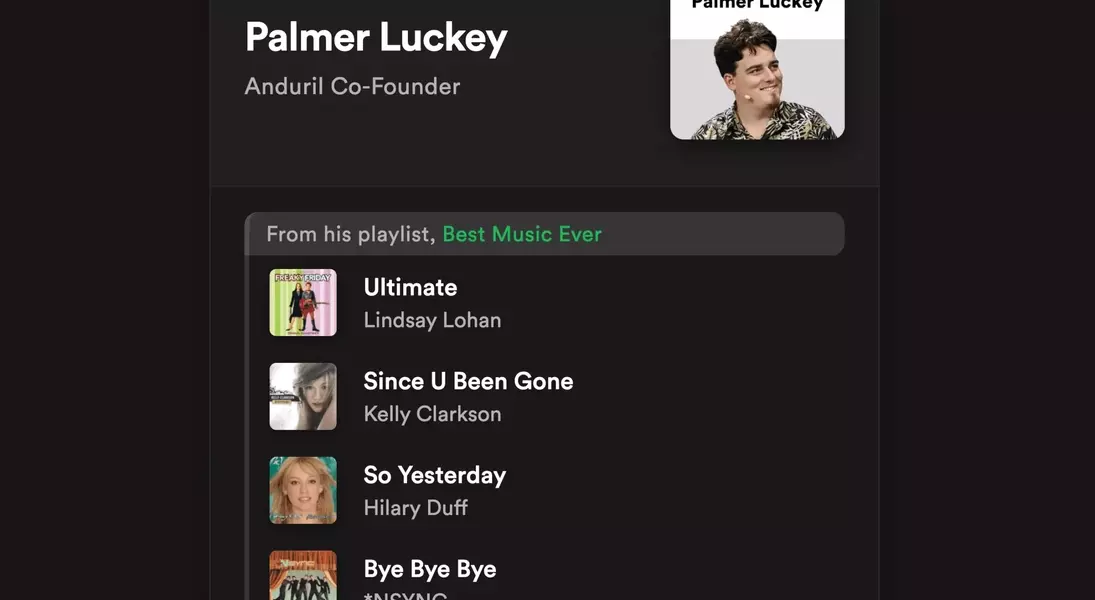

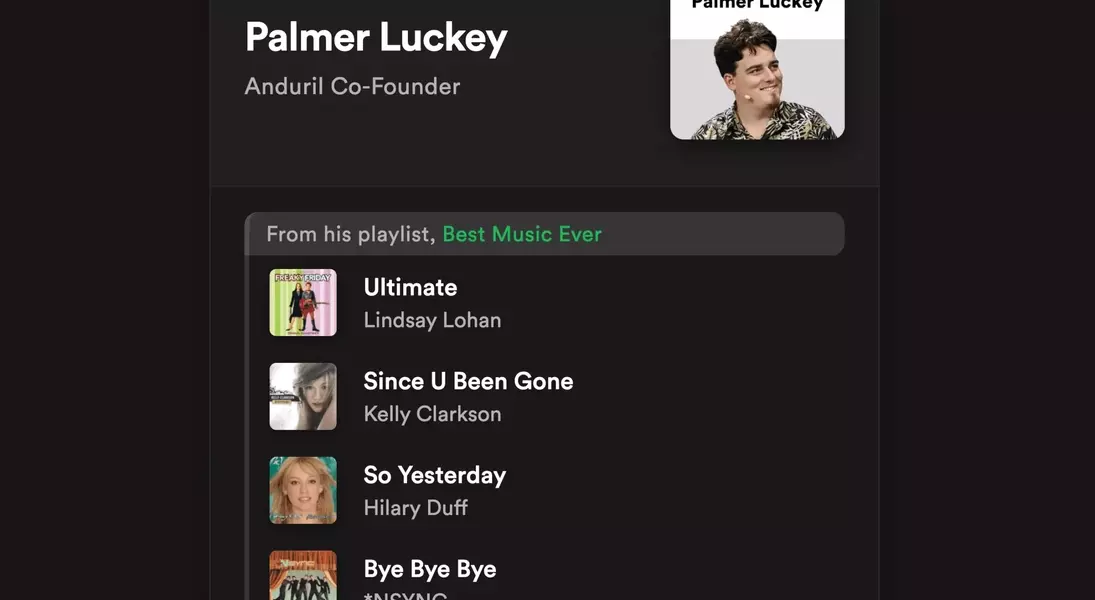

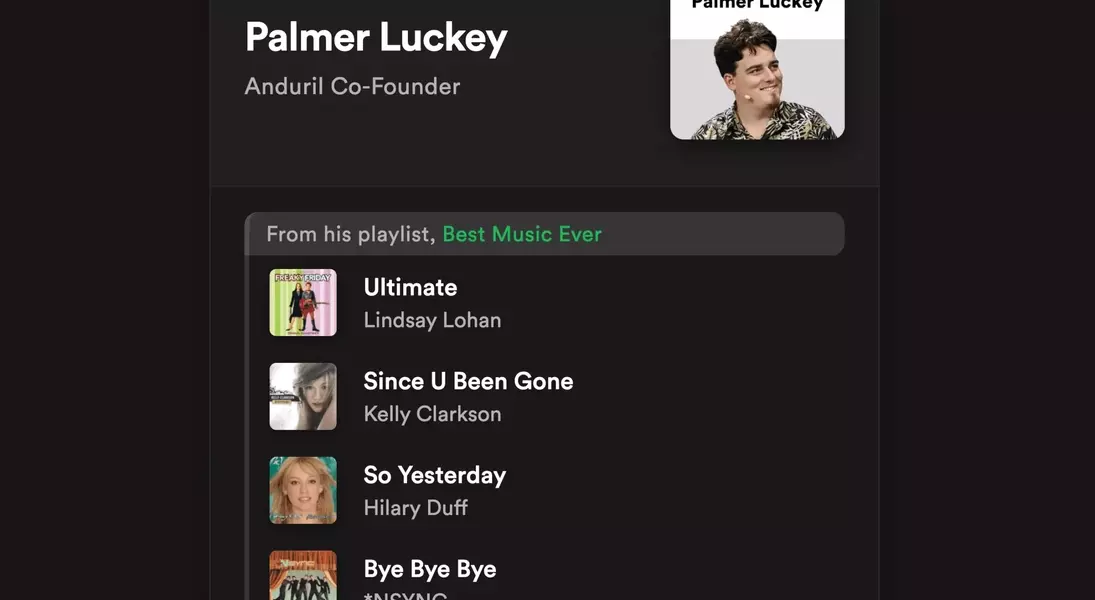

A recent revelation has reignited debate over digital privacy: a new site, "The Panama Playlists," has published the music preferences of several high-profile figures in technology, media, and politics. The collection, compiled by an anonymous creator, shows how easily personal listening data can be accessed, listing tracks favored by OpenAI CEO Sam Altman, Vice President JD Vance, and several journalists. Some of those named confirmed that their listed tastes were accurate, while others, such as journalist Kara Swisher, disputed the information, attributing discrepancies to shared accounts or the platform's public defaults. The incident underscores the risks of Spotify's current privacy configuration, which favors public sharing over user control.

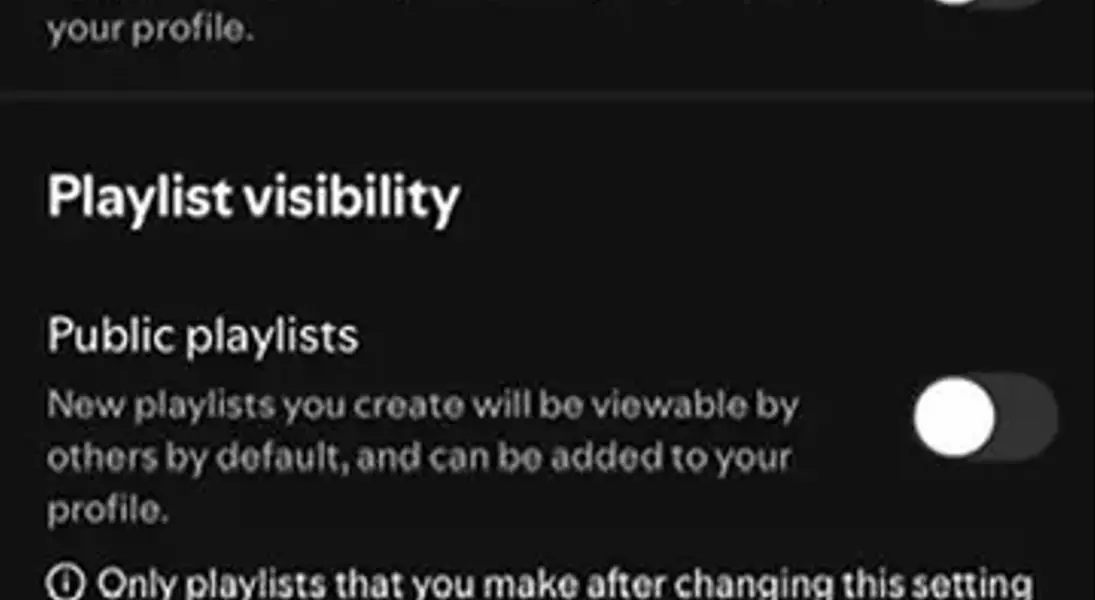

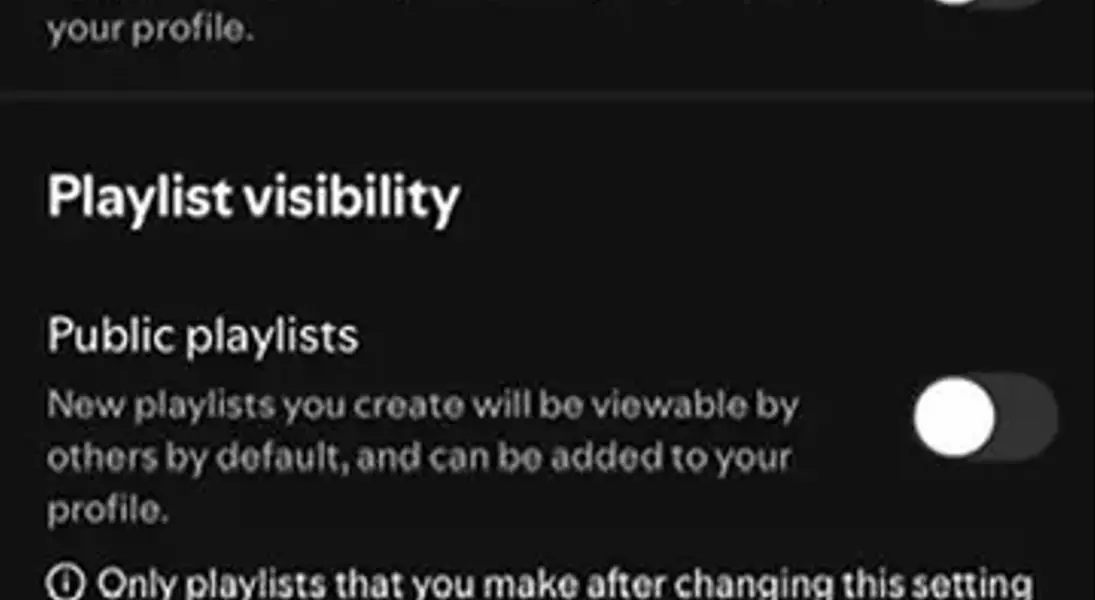

The root of this exposure lies in Spotify's design, which makes user profiles and playlists publicly visible by default. To protect their data, users must work through complex privacy settings and manually switch each playlist to private. This is a cumbersome process, because changing a setting does not apply retroactively to playlists that already exist. The platform's follow system makes matters worse: users can often follow someone without that person being explicitly notified, which allows passive observation of their listening activity. The same permissive approach extends well beyond music preferences. As outlined in Spotify's privacy policy, the platform collects a wide array of personal data, from search queries and streaming history to location data and device usage. Its structure prioritizes social interaction and data collection, often at the expense of individual privacy, echoing earlier concerns about user data exposure on other prominent services such as Venmo.

While the disclosure of musical tastes may seem trivial compared with more sensitive data breaches, it is a potent reminder of how pervasive digital surveillance has become and how much robust privacy safeguards matter. The episode should prompt both platforms and users to re-evaluate their data-handling practices and privacy settings. For users, it highlights the need to actively manage their digital footprint and to understand what default privacy settings actually expose. For companies, it underscores the ethical imperative to follow privacy-by-design principles, giving users intuitive and comprehensive control over their personal information from the outset. Upholding user privacy is not merely a technical challenge but a fundamental responsibility that underpins trust in the digital age.