Meta Platforms and Alphabet have entered into a $10 billion, six-year cloud computing agreement, a significant development in the artificial intelligence sector. The deal underscores the growing strategic importance of cloud infrastructure for advanced AI applications and shows how major technology companies are adapting to the demands of AI workloads. It validates Google Cloud's positioning as an AI-first provider and reflects a broader industry shift toward diversified cloud strategies that improve performance, optimize costs, and reduce vendor lock-in. For investors, the agreement offers fresh insight into the competitive dynamics of the cloud market and the valuation prospects of key players in the AI race.

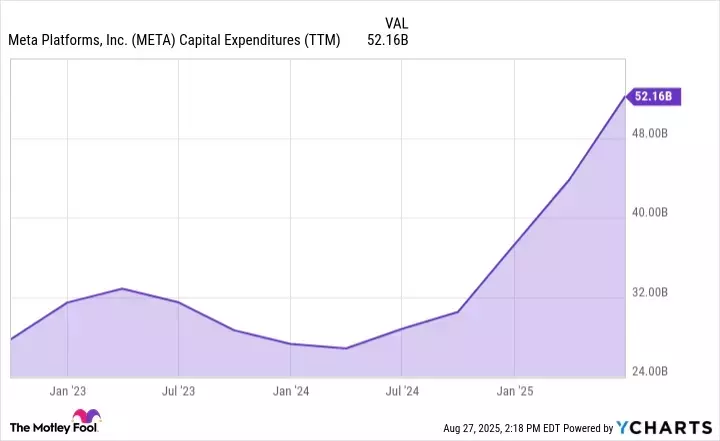

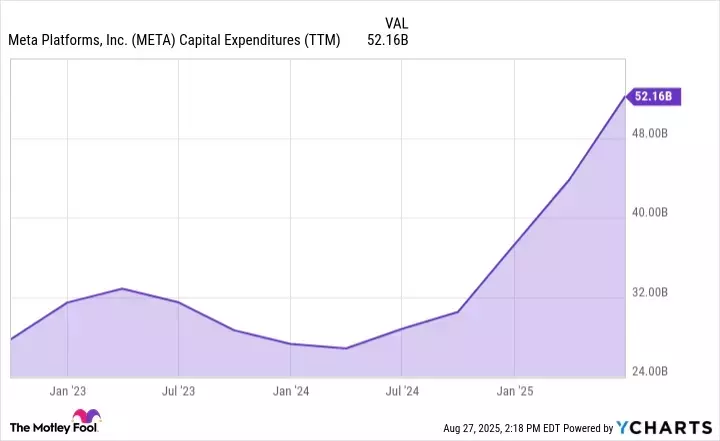

This partnership reflects the substantial capital flowing into AI infrastructure, with hyperscalers such as Amazon, Microsoft, and Alphabet investing heavily in the GPUs and custom silicon that AI workloads require. Meta's steadily rising capital expenditures, together with strategic moves such as its investment in Scale AI for data labeling and the creation of Meta Superintelligence Labs, underline its pursuit of artificial general intelligence (AGI). The agreement with Google Cloud gives Meta access to the specialized resources these efforts require, reinforcing the need for robust, adaptable cloud capacity to scale next-generation AI tools and improve complex algorithms.

Meta's Expanding AI Footprint and Strategic Partnerships

Meta's partnership with Google Cloud is a clear sign of its ongoing, aggressive investment in artificial intelligence, extending its substantial capital spending beyond traditional data center expansion. The company's financial commitments include a $14.3 billion investment in Scale AI, a leader in data labeling, the process of annotating raw data so that it can be used to train and scale AI models. Meta has also unveiled Meta Superintelligence Labs (MSL), which signals its ambition to advance beyond current large language models (LLMs) and lead the development of artificial general intelligence (AGI).

This collaboration gives Meta access to the advanced infrastructure and specialized AI capabilities that its growing AI initiatives need. By drawing on Google's expertise and resources, Meta aims to enhance its generative AI tools, develop sophisticated agentic assistants, and refine its advertising algorithms. The move helps Meta maintain its competitive edge in a rapidly evolving AI landscape by securing the computational power and data processing capacity to execute its long-term AI vision.

Alphabet's Ascendancy in the AI Cloud Arena

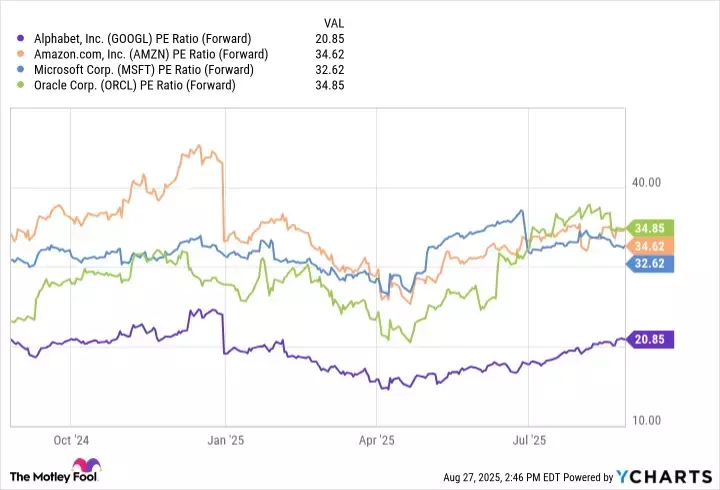

Alphabet's Google Cloud Platform (GCP) is solidifying its position as a major force in the AI cloud ecosystem, particularly following its landmark deals with both Meta and OpenAI. These partnerships signal a shift in cloud computing: even established companies with ties to rivals such as Microsoft Azure and Amazon Web Services (AWS) are diversifying their cloud infrastructure. GCP's primary draw is its specialized Tensor Processing Unit (TPU) chips and AI-optimized infrastructure, backed by robust cybersecurity protocols, which make it an attractive choice for companies at the forefront of AI innovation.

While AWS pioneered the public cloud and Azure carved out its niche in enterprise IT, Google Cloud has positioned itself as an AI-first cloud provider with a strong emphasis on machine learning and data analytics. That major AI players such as Meta and OpenAI have chosen Google Cloud underscores the effectiveness of Alphabet's strategic focus. Multi-cloud strategies are becoming standard practice: businesses distribute workloads across providers to optimize for cost, performance, and reliability, which helps them avoid computing bottlenecks and stay flexible in AI training and inference.