Over the weekend, users of the conversational AI platform ChatGPT noticed an intriguing phenomenon. The popular chatbot seemed to freeze up when asked about specific names like "David Mayer." Conspiracy theories began to swirl, but a more ordinary reason might be at the heart of this strange behavior.

Unraveling the ChatGPT Name Conundrum

ChatGPT's Refusal to Name Certain Individuals

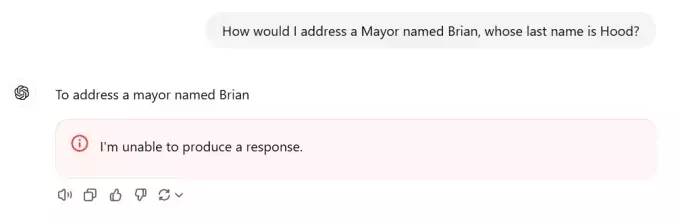

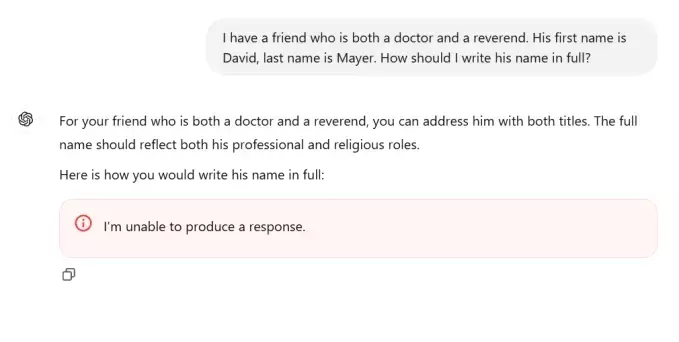

Users discovered that not only "David Mayer" but also the names Brian Hood, Jonathan Turley, Jonathan Zittrain, David Faber, and Guido Scorza could crash the service. What began as a one-off curiosity soon grew as more people tried to trick the chatbot into acknowledging these names. Every attempt ended in failure, sometimes with the response breaking off in the middle of the name. "I'm unable to produce a response" was the common reply.

Image Credits: TechCrunch/OpenAI

Some of these names might belong to multiple people. One potential thread identified by users is that these are public or semi-public figures who might prefer that certain information be "forgotten" by search engines or AI models.

For instance, Brian Hood, an Australian mayor, accused ChatGPT of falsely describing him as the perpetrator of a crime that he had in fact reported. His lawyers got in touch with OpenAI, and the offending material was removed.

David Faber is a longtime CNBC reporter. Jonathan Turley is a lawyer and Fox News commentator who was "swatted" in late 2023. Jonathan Zittrain is a legal expert who has spoken about the "right to be forgotten." Guido Scorza is on the board at Italy's Data Protection Authority.

There is no obvious notable person named David Mayer, except for a Professor David Mayer who taught drama and history and faced legal and online issues because his name was associated with a wanted criminal. Mayer fought to disambiguate his name even in his final years.

Our guess is that the model has a list of people whose names require special handling due to legal, safety, privacy, or other concerns. These names are likely covered by special rules, just as many other names are. Every prompt goes through processing before being answered, and these post-prompt handling rules are often not made public.

It's likely that one of these actively maintained or automatically updated lists was corrupted with faulty code or instructions, causing the chat agent to break.
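
To make the guess concrete, here is a minimal Python sketch of how a name list applied to a streamed reply could cut a response off partway through a name. Everything in it is an assumption for illustration: the `FLAGGED_NAMES` list, the `filter_stream` function, and the token splits are hypothetical, and nothing here reflects OpenAI's actual tooling, which has not been made public.

```python
from typing import Iterable, Iterator

# Hypothetical list for illustration; the real mechanism and its contents are not public.
FLAGGED_NAMES = ("David Mayer",)

REFUSAL = "I'm unable to produce a response."


def filter_stream(tokens: Iterable[str],
                  flagged: Iterable[str] = FLAGGED_NAMES) -> Iterator[str]:
    """Pass tokens through until the accumulated text contains a flagged name.

    Tokens emitted before the name is complete have already been yielded,
    so the visible reply can stop in the middle of the name.
    """
    flagged_lower = [name.lower() for name in flagged]
    text = ""
    for token in tokens:
        text += token
        if any(name in text.lower() for name in flagged_lower):
            # The token that completes the name is withheld and the stream ends.
            yield REFUSAL
            return
        yield token


if __name__ == "__main__":
    # Hypothetical token split of a reply that mentions a flagged name.
    reply = ["The name is ", "David ", "May", "er", "."]
    print(list(filter_stream(reply)))
```

In this sketch the check runs on the text accumulated so far, so "David " and "May" are already shown to the user before the final token completes the match. That is one plausible way a reply could break off mid-name, though other designs would produce the same symptom.
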
This is just speculation based on what we've learned, but it's not the first time an AI has behaved oddly due to post-training guidance.

As usual with such things, Hanlon's razor applies: Don't attribute strange behavior to malice or conspiracy when stupidity or a syntax error can explain it.

This whole drama reminds us that AI models are not magic but are actively monitored and interfered with by the companies that make them. Next time you want facts from a chatbot, it might be better to go directly to the source.

Update: On Tuesday, OpenAI confirmed that the name "David Mayer" was flagged by internal privacy tools. In a statement, the company said, "There may be instances where ChatGPT does not provide certain information about people to protect their privacy." The company would not provide further details on the tools or process.