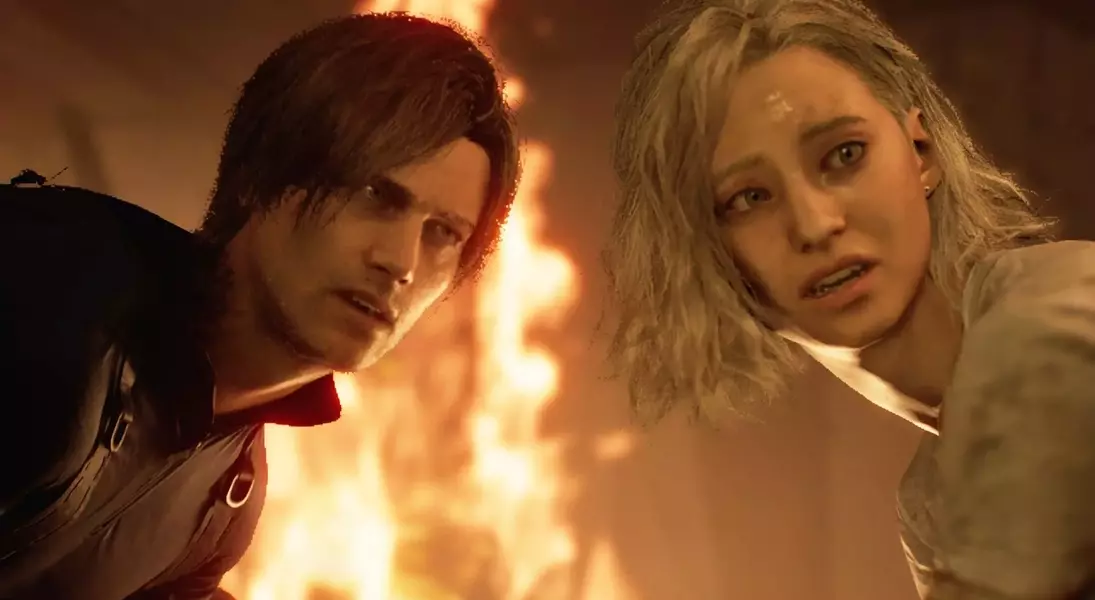

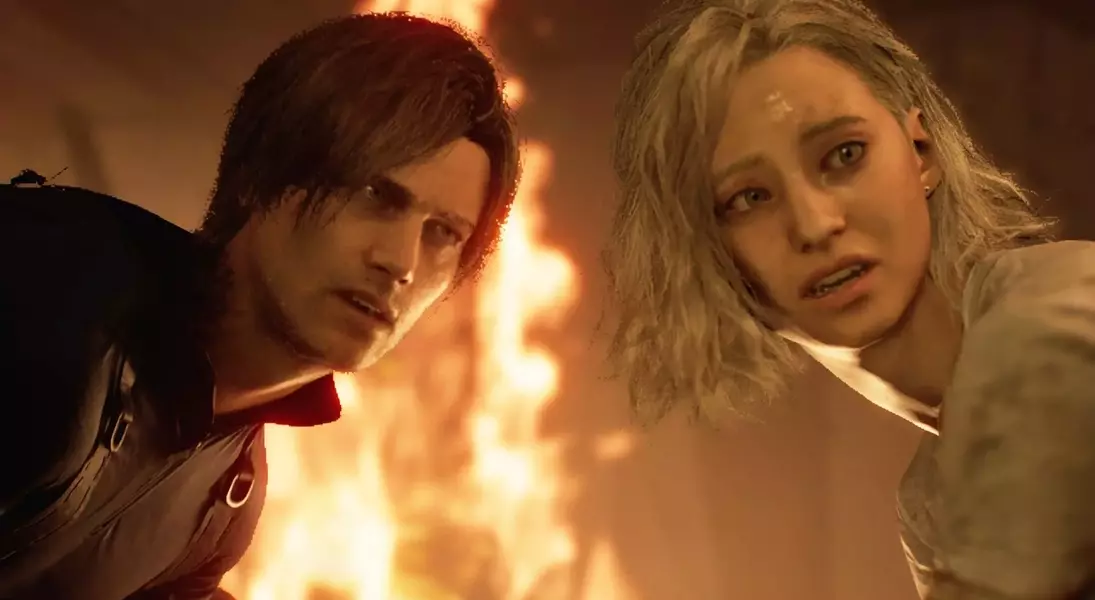

Resident Evil Requiem, released in 2026, has drawn attention for using DirectStorage together with GPU data decompression via Nvidia's GDeflate format. The approach is meant to speed up the path from storage to system memory and on to the graphics card's VRAM, which should shorten loading times. In practice, though, the feature behaves mysteriously: the game enables GPU decompression somewhat arbitrarily, even on graphics cards that fully support it.

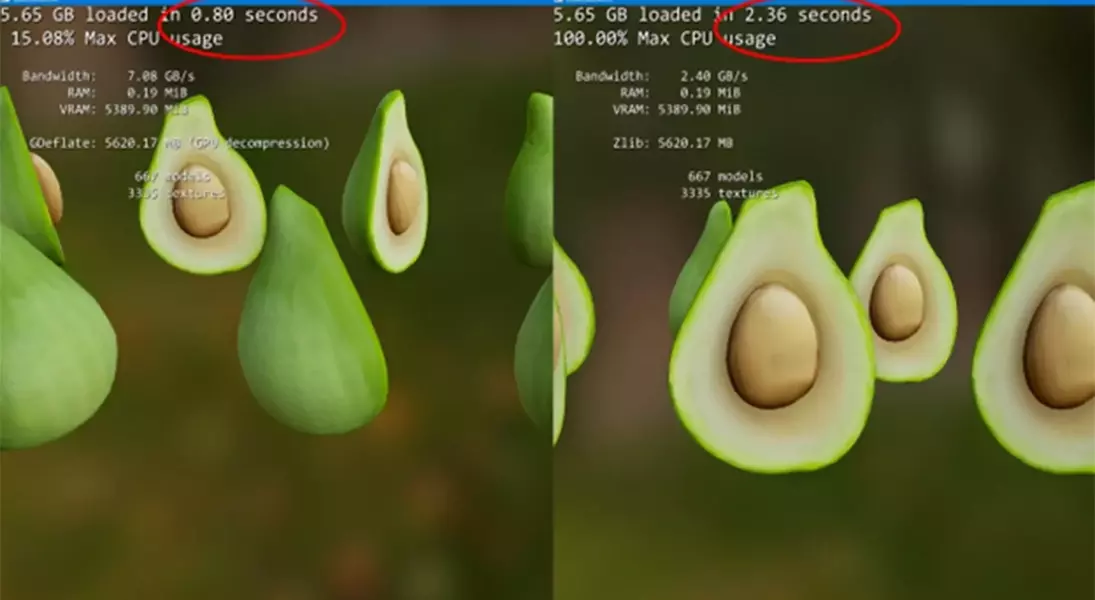

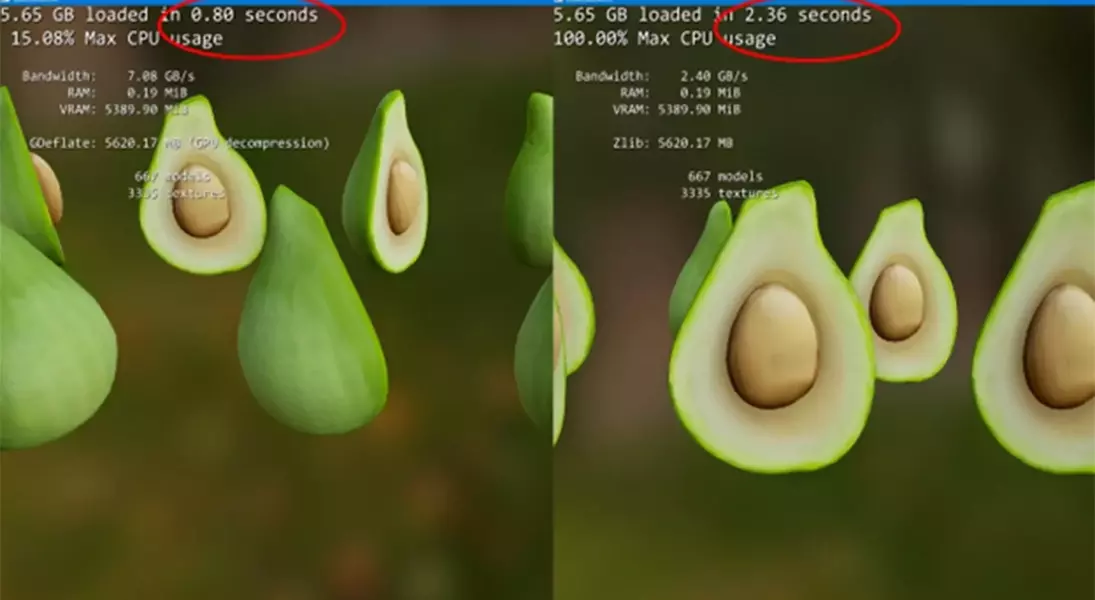

Pairing DirectStorage with GDeflate is a forward-thinking step, but Resident Evil Requiem's implementation has revealed an unexpected inconsistency. Initial observations confirmed that the game uses DirectStorage. Closer analysis with tools such as SpecialK, a utility for inspecting game internals, then showed that GDeflate's GPU decompression was not applied uniformly. The RTX 5090, 5070, and 5060 used GPU decompression, while a seemingly capable RTX 4060 laptop fell back to CPU decompression. More perplexing still, an RTX 5090 system switched to the CPU fallback after a driver reinstallation.

The erratic behavior is not tied to specific hardware. In personal tests on systems with an RTX 5070, an RTX 4080 Super, and a Radeon RX 7900 XT, the game used the CPU fallback every time, even though all three GPUs support hardware decompression. This differs from titles like Ghost of Tsushima, where the developers deliberately chose CPU decompression to reserve GPU resources for rendering, a choice made easier by how efficiently modern CPUs handle the task. The core question remains: why does Resident Evil Requiem enable GPU GDeflate so unpredictably?
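To make the pattern easier to see, the reports above can be tabulated. The following is a minimal Python sketch, not a measurement tool: the data is transcribed from this article, and the context labels and the names `OBSERVATIONS`, `paths_by_gpu`, and `inconsistent_gpus` are illustrative assumptions.

```python
from collections import defaultdict

# Observations as described in this article: (GPU, context, decompression path).
# The context strings are informal labels chosen for this sketch.
OBSERVATIONS = [
    ("RTX 5090", "SpecialK analysis", "GPU"),
    ("RTX 5070", "SpecialK analysis", "GPU"),
    ("RTX 5060", "SpecialK analysis", "GPU"),
    ("RTX 4060 Laptop", "SpecialK analysis", "CPU"),
    ("RTX 5090", "after driver reinstall", "CPU"),
    ("RTX 5070", "personal test", "CPU"),
    ("RTX 4080 Super", "personal test", "CPU"),
    ("Radeon RX 7900 XT", "personal test", "CPU"),
]


def paths_by_gpu(observations):
    """Map each GPU model to the set of decompression paths seen on it."""
    paths = defaultdict(set)
    for gpu, _context, path in observations:
        paths[gpu].add(path)
    return dict(paths)


def inconsistent_gpus(observations):
    """Return the GPU models observed using both the GPU and the CPU path."""
    by_gpu = paths_by_gpu(observations)
    return sorted(gpu for gpu, seen in by_gpu.items() if len(seen) > 1)


if __name__ == "__main__":
    for gpu, seen in sorted(paths_by_gpu(OBSERVATIONS).items()):
        print(f"{gpu}: {', '.join(sorted(seen))}")
    print("Both paths observed on:", inconsistent_gpus(OBSERVATIONS))
```

Grouping by model shows that the RTX 5070 and RTX 5090 each appear with both paths, so the GPU model alone cannot explain which decompression method the game picks.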

The inconsistent activation of GDeflate in Resident Evil Requiem points to a possible issue in the game's code or to a detection mechanism for GPU decompression that is not robust enough. Neither the driver version nor the status of Resizable BAR appears to influence the behavior, which deepens the puzzle. The performance difference between CPU and GPU decompression for GDeflate is reportedly minimal, but that does not explain why the game fails to use an advanced hardware feature consistently. The situation highlights how difficult it is for developers to optimize for a diverse hardware landscape and to integrate cutting-edge technologies seamlessly.