As the GeForce GTX 10-series approaches its tenth anniversary, Nvidia's gaming GPUs have changed fundamentally, integrating ray tracing and AI to enhance the PC gaming experience. While core elements such as compute performance, cache, and VRAM bandwidth remain critical for high frame rates, today's GeForce graphics cards are far more sophisticated and versatile than their 2016 predecessors.

With a Super refresh of the Blackwell lineup looking unlikely due to VRAM supply constraints, we take a look back at Nvidia's GPU generations. By examining data from 60-class, 70-class, 80-class, and top-tier models (including the 90-class and the former Titan series), we aim to project the likely specifications of the forthcoming RTX 60-series and, from those trends, the probable design and performance characteristics of Nvidia's next-generation gaming GPUs.

Nvidia does not manufacture its own GPUs; it relies on outside foundries, currently TSMC (Taiwan Semiconductor Manufacturing Company), whose advanced process nodes are crucial for high-performance chips. While Nvidia uses TSMC's cutting-edge N3 node for its Rubin AI processors, the Blackwell gaming chips that power the RTX 50-series graphics cards are built on 4N, a customized version of TSMC's N5 node. The RTX 40-series GPUs also used 4N, whereas the 30-series employed Samsung's 8LPH technology.

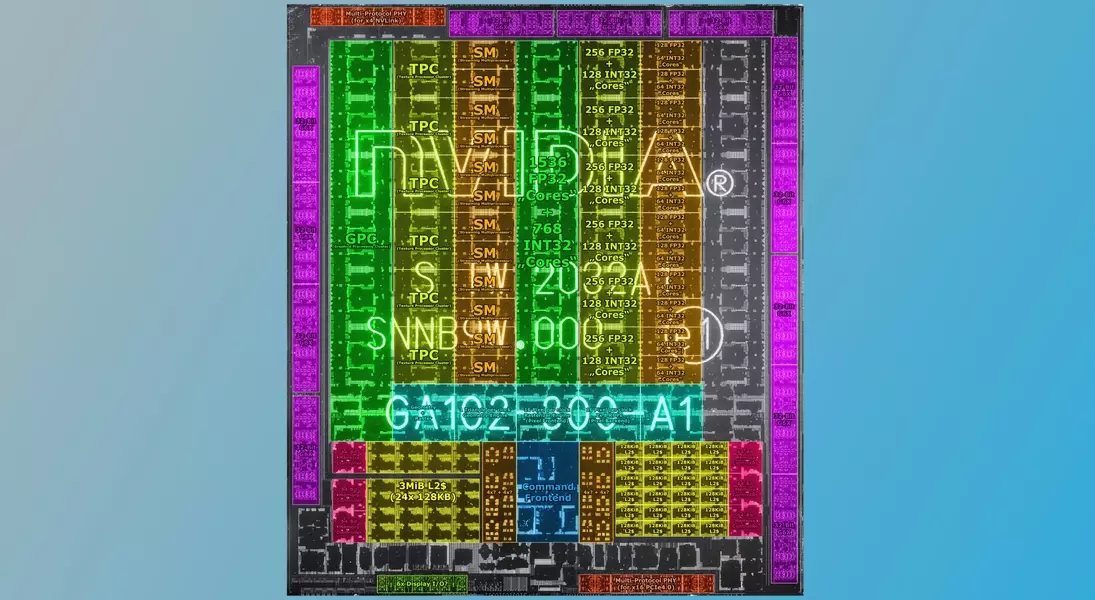

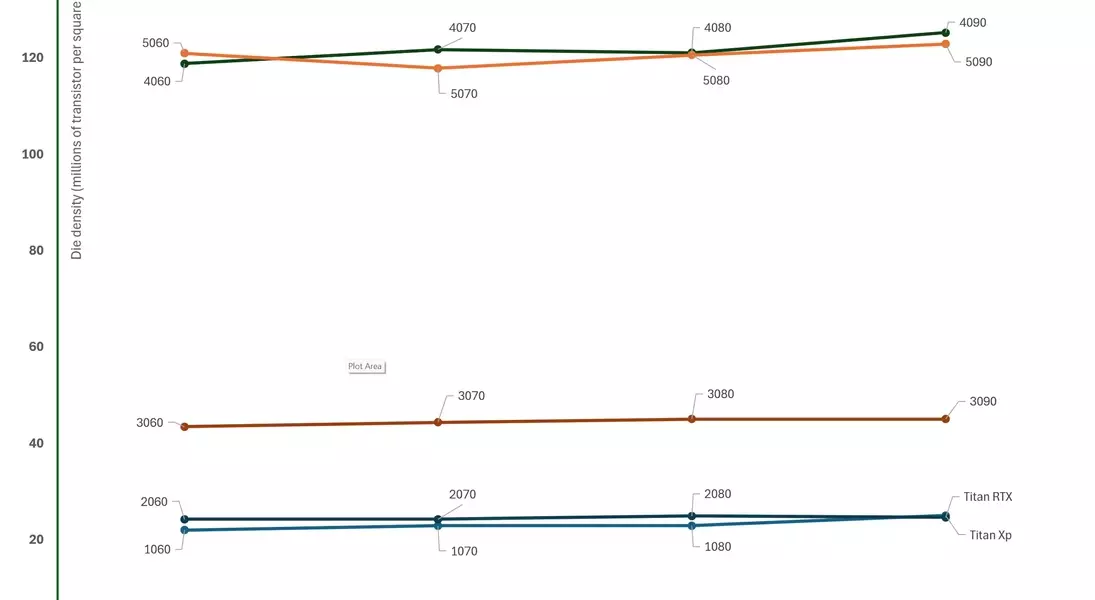

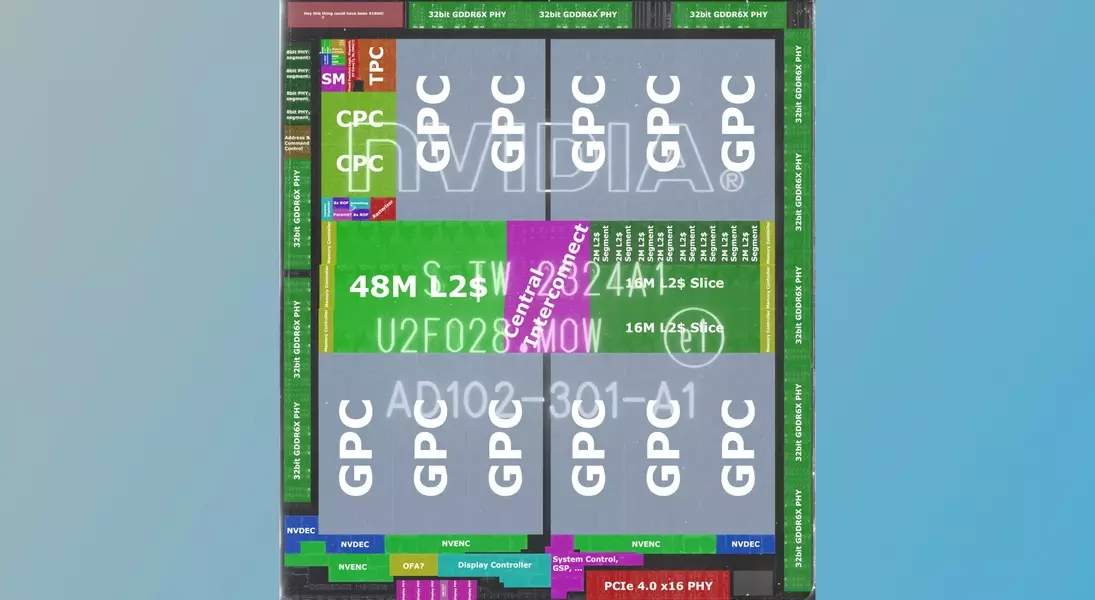

This reliance on external foundries and specific process nodes is key to predicting what the RTX 60-series chips will look like. Given Nvidia's heavy focus on AI, it is expected to choose TSMC's N3 node rather than the newer N2, primarily for cost reasons. That choice directly determines the die density (the number of transistors per square millimeter) of the future GPUs. Current Blackwell and Ada Lovelace gaming chips have a density of approximately 120 million transistors/mm². If the RTX 60-series moves to TSMC's N3 node, density could rise substantially, potentially by around 66%, to roughly 200 million transistors/mm².
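
The density arithmetic is easy to check by hand; the Python sketch below only makes the units explicit. The 600 mm² die and its 72-billion-transistor count are hypothetical inputs chosen to land on the ~120 MTr/mm² figure, and applying the 66% uplift uniformly to the whole die is a simplifying assumption that the next paragraph shows to be optimistic.

```python
def density_mtr_per_mm2(transistors_billion: float, die_area_mm2: float) -> float:
    """Transistor density in millions of transistors (MTr) per mm^2."""
    return transistors_billion * 1000 / die_area_mm2


def projected_transistors_billion(die_area_mm2: float, density_mtr: float, uplift: float) -> float:
    """Transistor budget (billions) for a die of the same area after a density uplift.

    Assumption: the uplift applies uniformly to the whole die, which overstates
    the gain because cache and analog blocks scale far less than logic.
    """
    return die_area_mm2 * density_mtr * (1 + uplift) / 1000


# Hypothetical 600 mm^2 die at today's ~120 MTr/mm^2
today = density_mtr_per_mm2(72.0, 600.0)
n3_budget = projected_transistors_billion(600.0, today, 0.66)
print(f"{today:.0f} MTr/mm^2 today, ~{n3_budget:.1f}B transistors on N3")
```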

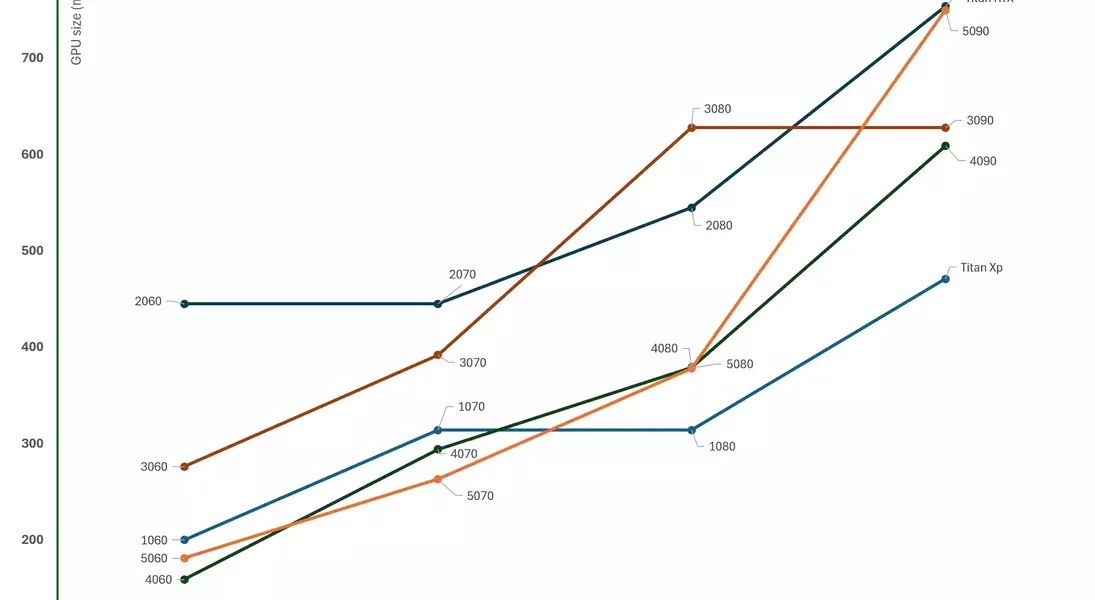

However, this density gain applies mainly to logic such as the shader cores. Density improvements for other critical GPU elements, such as cache and the PCIe/VRAM interface circuitry, are considerably smaller, at around 5% at most. So while Nvidia can fit significantly more CUDA cores, its options for adding cache and analog circuitry are more limited. Historically, Nvidia has favored smaller dies for most of its gaming products to improve wafer yields and profit margins; the exception is its ultra-high-end GPUs such as the RTX 5090, which are designed to serve the prosumer AI market.
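
One way to see why a logic-only gain matters is to estimate how much smaller an existing design would get if ported to N3, given its area split between logic, SRAM, and analog/IO. In this Python sketch the area split is a hypothetical assumption, not a measured Blackwell floorplan; the per-block gains follow the figures above (about 66% for logic and about 5% for cache and analog circuitry).

```python
def effective_density_gain(area_fractions: dict[str, float], block_gains: dict[str, float]) -> float:
    """Whole-die density gain when an existing design is ported to a new node.

    Each block's area shrinks by 1 / (1 + gain); the whole-die gain is the
    reciprocal of the new total area (as a fraction of the old) minus one.
    """
    if abs(sum(area_fractions.values()) - 1.0) > 1e-9:
        raise ValueError("area fractions must sum to 1")
    new_area = sum(frac / (1 + block_gains[block]) for block, frac in area_fractions.items())
    return 1 / new_area - 1


# Hypothetical area split for a gaming GPU die; gains follow the text
# (~66% for logic, ~5% for SRAM and analog/IO).
mix = {"logic": 0.70, "sram": 0.20, "analog_io": 0.10}
gains = {"logic": 0.66, "sram": 0.05, "analog_io": 0.05}
print(f"effective density gain: {effective_density_gain(mix, gains):.0%}")
```

Shifting more of the assumed area toward logic pushes the result toward the logic-only figure, which is the trade-off the paragraph describes: extra transistors are cheapest to spend on shader cores.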

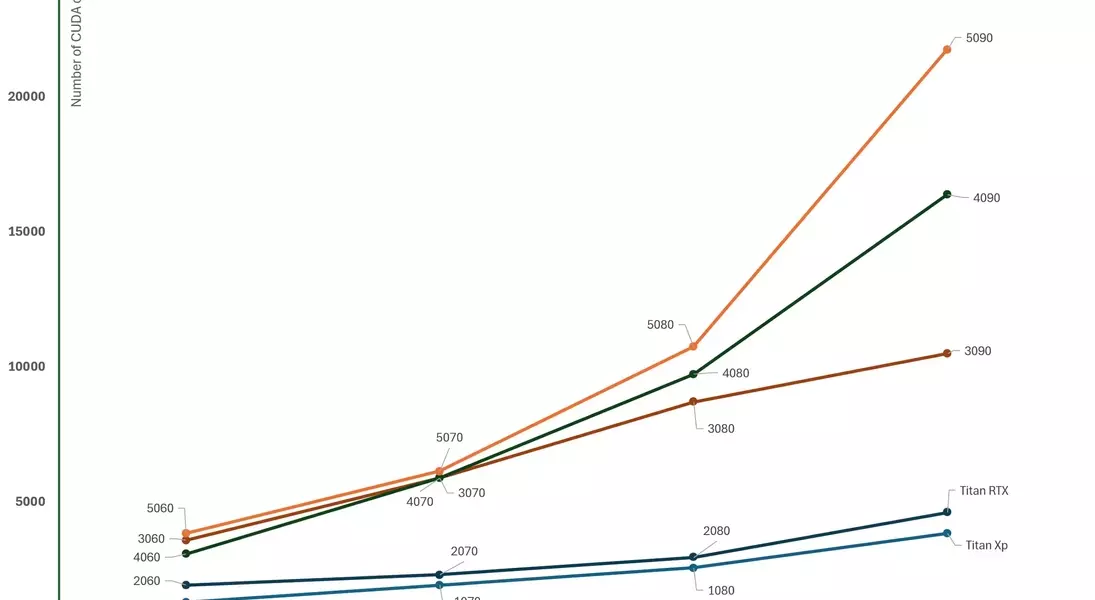

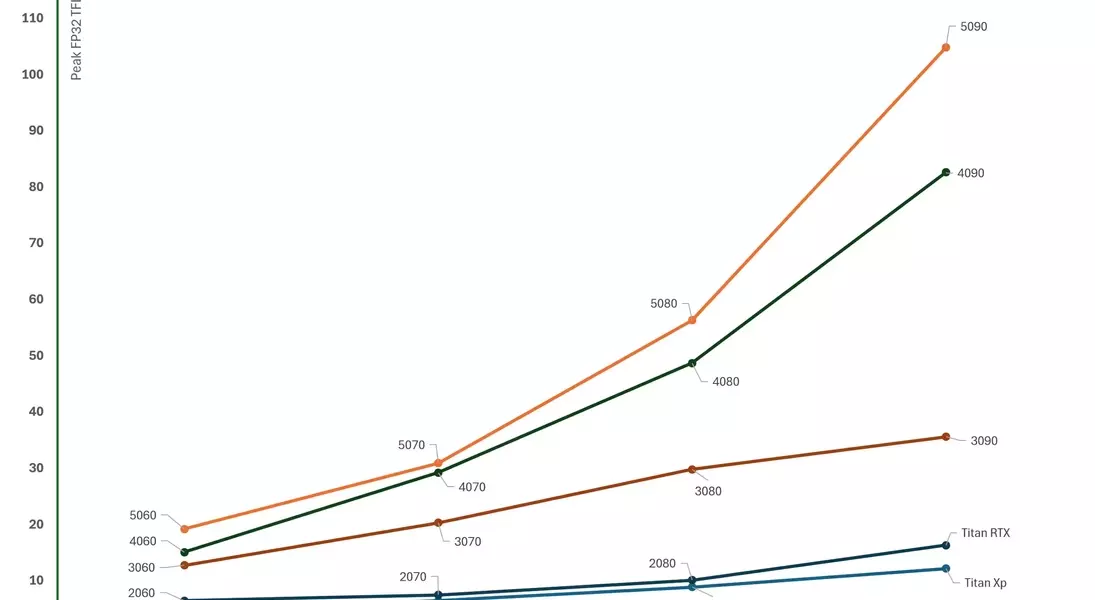

Considering these factors, the RTX 60-series will probably feature GPUs with 60-70% more transistors than the current Blackwell chips at similar die sizes. How Nvidia spends this larger transistor budget will be crucial. More CUDA cores are expected, but the increase may not match the jump in density, because historically the two have not moved in lockstep. The transition from Turing to Ampere brought an 81% increase in average density but a 180% increase in CUDA cores per unit of die area, largely because Ampere doubled the FP32 units in each SM. Conversely, Ada Lovelace GPUs, despite being 173% denser than Ampere, gained only 57% more shader units per unit of die area.
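
Dividing one growth factor by the other puts both transitions on a common scale. This Python sketch uses only the percentages quoted above; the ratio itself is an illustrative metric, not one Nvidia publishes.

```python
def core_vs_density_ratio(core_per_area_gain: float, density_gain: float) -> float:
    """How CUDA cores per mm^2 grew relative to transistors per mm^2.

    1.0 means cores tracked density; above 1.0 means cores outpaced it;
    below 1.0 means much of the new transistor budget went elsewhere.
    """
    return (1 + core_per_area_gain) / (1 + density_gain)


# Generational gains quoted in the text, as fractions (1.80 = +180%)
transitions = {
    "Turing -> Ampere": (1.80, 0.81),
    "Ampere -> Ada Lovelace": (0.57, 1.73),
}
for name, (core_gain, density_gain) in transitions.items():
    print(f"{name}: {core_vs_density_ratio(core_gain, density_gain):.2f}")
```

The two historical ratios (about 1.55 and 0.58) sit far apart, so past generations bracket rather than pin down how much of a roughly 66% density gain would turn into CUDA cores.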

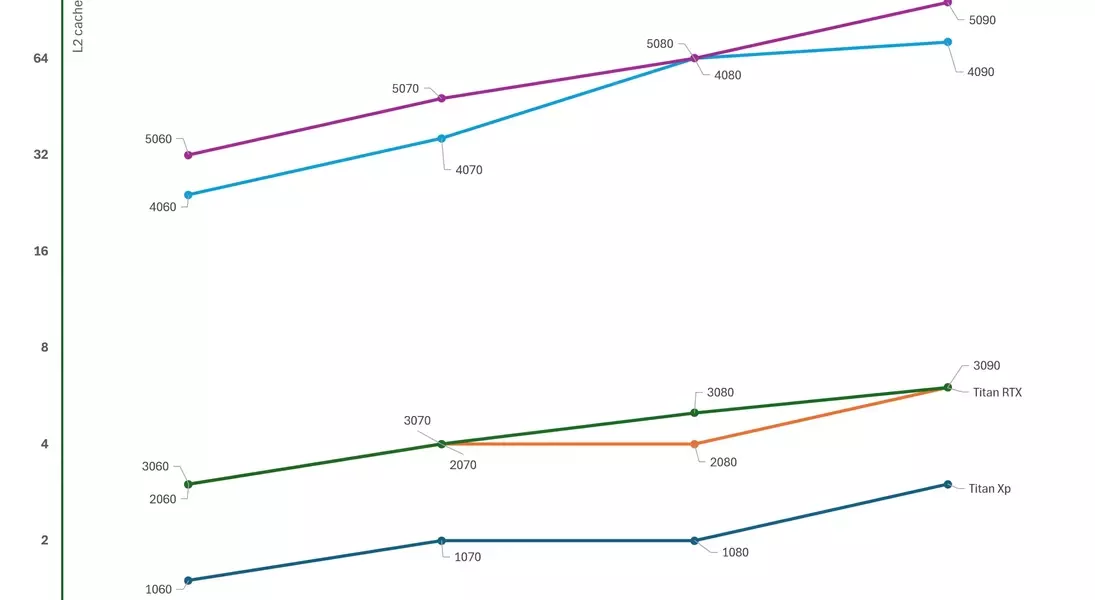

Nvidia's approach to L2 cache has also changed significantly. Pascal, Turing, and Ampere GPUs had relatively small L2 caches, typically a few megabytes. Then, following AMD's approach with RDNA 2, Nvidia dramatically enlarged the last-level cache in its RTX 40 and 50-series. This much larger pool of SRAM relieves pressure on VRAM bandwidth and boosts overall compute and ray tracing performance. Because SRAM barely shrinks on newer process nodes, RTX 60-series consumer GPUs are unlikely to carry significantly more L2 cache than Blackwell, with perhaps a slight increase for higher-end models.
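
The benefit of a large L2 can be illustrated with a deliberately simplified model: a request that hits in L2 never touches VRAM, so the request rate the memory system can sustain scales with 1 / (1 - hit rate). This Python sketch assumes that idealized model (it ignores L2 bandwidth limits, write-backs, and latency), and the hit rates and the 1,000 GB/s figure are hypothetical; real hit rates vary with the game, resolution, and cache size.

```python
def dram_traffic_gb(requested_gb: float, l2_hit_rate: float) -> float:
    """Data (GB) that still has to come from VRAM after L2 hits are served on-chip."""
    if not 0.0 <= l2_hit_rate < 1.0:
        raise ValueError("hit rate must be in [0, 1)")
    return requested_gb * (1.0 - l2_hit_rate)


def effective_bandwidth_gbs(vram_bandwidth_gbs: float, l2_hit_rate: float) -> float:
    """Request bandwidth the GPU can sustain before VRAM saturates.

    Simplification: ignores L2 bandwidth limits, write-backs, and latency.
    """
    if not 0.0 <= l2_hit_rate < 1.0:
        raise ValueError("hit rate must be in [0, 1)")
    return vram_bandwidth_gbs / (1.0 - l2_hit_rate)


# Hypothetical 1,000 GB/s memory system at different L2 hit rates
for hit_rate in (0.2, 0.5, 0.6):
    print(f"hit rate {hit_rate:.0%}: ~{effective_bandwidth_gbs(1000.0, hit_rate):,.0f} GB/s effective")
```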

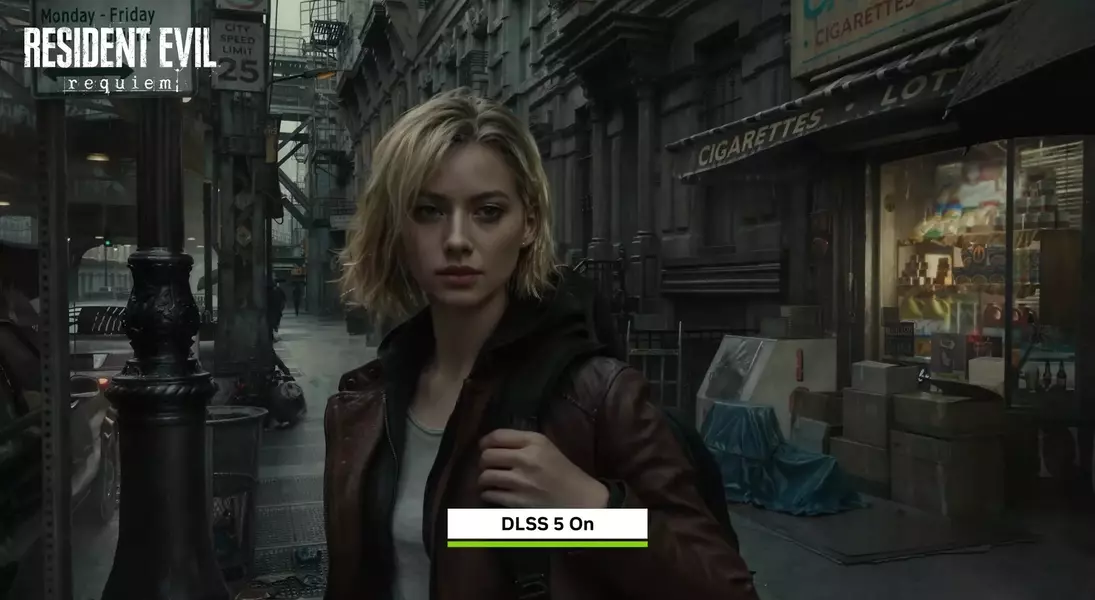

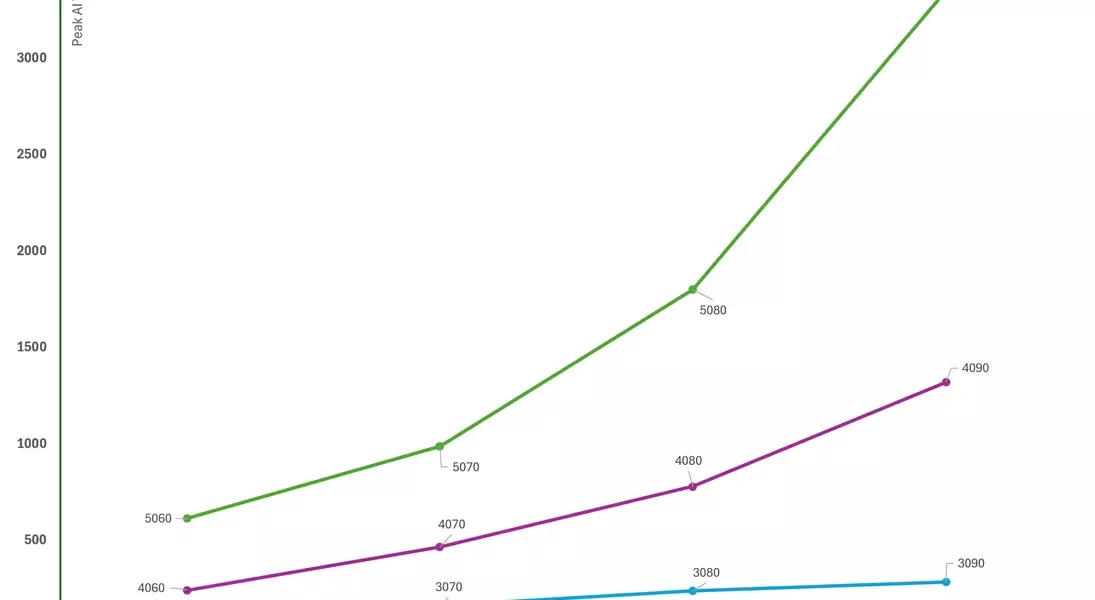

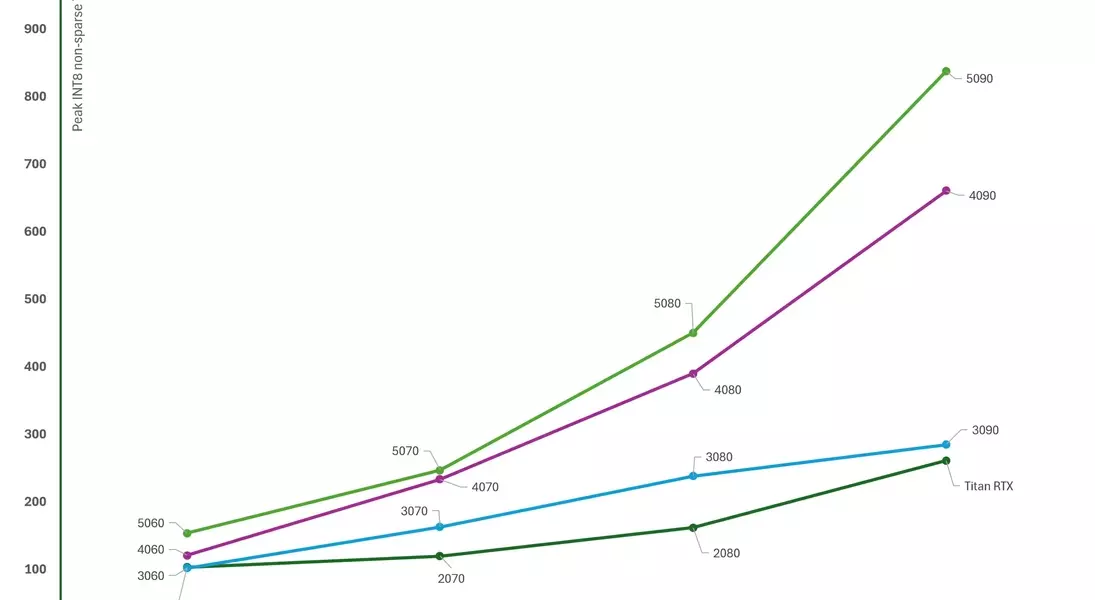

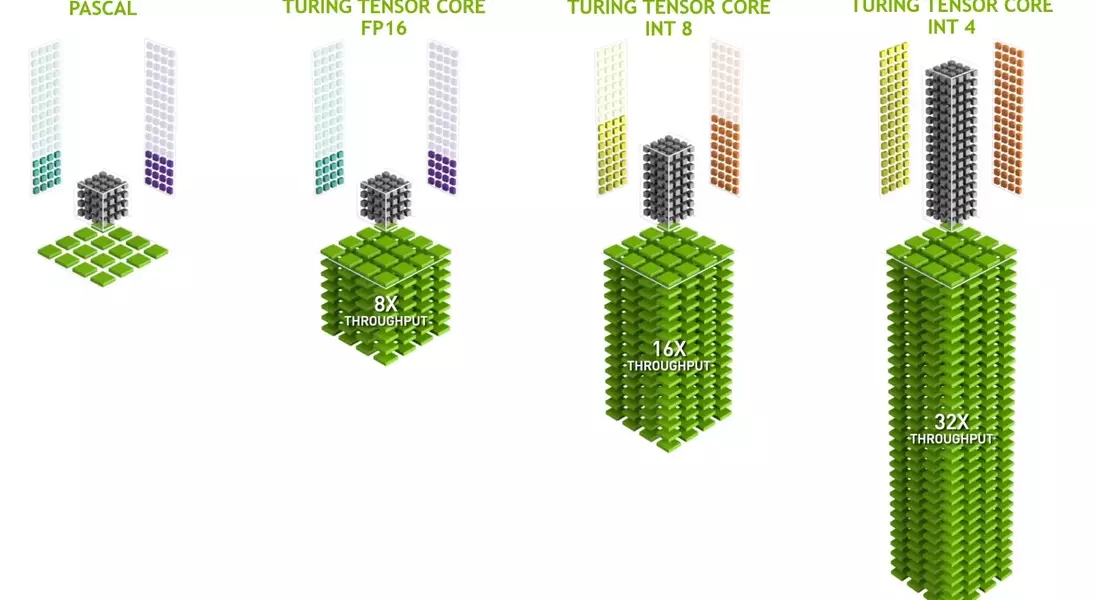

Tensor and RT cores have also advanced considerably. Nvidia's 'AI TOPS' figures, which measure the peak throughput of the Tensor cores, rise misleadingly fast from one generation to the next, partly because each generation adds support for lower-precision data formats (FP8 with Ada Lovelace, FP4 with Blackwell) and quotes its peak in the newest one. When normalized to a consistent INT8 format, the progression is more moderate: Tensor core counts may grow with die density, but their per-cycle throughput might not improve dramatically. Similarly, while RT cores have become more capable, they are not expected to take up significantly more die area within each Streaming Multiprocessor (SM). Nvidia might add dedicated cache for the Tensor cores, similar to that in its data center Blackwell AI chips, to support more demanding DLSS workloads.
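
Normalizing a quoted 'AI TOPS' figure amounts to undoing the format and sparsity multipliers. This Python sketch assumes that FP8 runs at the INT8 rate, that FP4 runs at twice that rate, and that quoted sparse figures are exactly double the dense peak (2:4 structured sparsity); these factors are simplifications, and the quoted TOPS inputs are hypothetical rather than figures for specific cards.

```python
# Peak tensor throughput of each format relative to dense INT8 on the same GPU.
# Simplifying assumption: FP8 runs at the INT8 rate and FP4 at twice that rate.
FORMAT_VS_INT8 = {"int8": 1.0, "fp8": 1.0, "fp4": 2.0}

# Assumption: sparse figures use 2:4 structured sparsity, doubling the dense peak.
SPARSITY_FACTOR = 2.0


def int8_dense_tops(quoted_tops: float, fmt: str, sparse: bool) -> float:
    """Convert a marketing 'AI TOPS' figure to a dense INT8-equivalent TOPS value."""
    tops = quoted_tops / FORMAT_VS_INT8[fmt.lower()]
    if sparse:
        tops /= SPARSITY_FACTOR
    return tops


# Hypothetical quoted figures for two generations
older = int8_dense_tops(2000.0, "fp8", sparse=True)
newer = int8_dense_tops(4000.0, "fp4", sparse=True)
print(f"quoted uplift 2.0x, normalized uplift {newer / older:.1f}x")
```

In the example, the quoted figure doubles while the normalized throughput stays flat, which is the kind of gap the paragraph warns about.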

Forecasting the specifications of the RTX 60-series means balancing historical trends against current technological constraints and market dynamics. The primary models (RTX 6090, 6080, 6070, and 6060) are projected to keep die sizes similar to Blackwell's, but with potentially 30-50% more CUDA cores. VRAM capacity could grow with the adoption of 3GB GDDR7 modules, although memory bus widths are likely to stay the same to protect profit margins amid high memory prices. Clock speeds, which rose significantly in the RTX 40 and 50-series, might not see similar leaps on TSMC's N3 node. With core counts up 30-50% and clocks roughly flat, the RTX 60-series could therefore offer a 25-50% increase in compute performance over the 50-series.
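
The capacity and compute arithmetic in this paragraph can be checked with a few helper functions. This Python sketch rests on stated assumptions: one 32-bit GDDR7 device per 32 bits of bus (two in clamshell mode), and peak FP32 counted as one fused multiply-add (2 FLOPs) per CUDA core per clock. The core and clock uplifts in the example are hypothetical inputs chosen to span the 25-50% range, not leaked specifications.

```python
GDDR7_DEVICE_BITS = 32  # each GDDR7 device exposes a 32-bit interface


def vram_capacity_gb(bus_width_bits: int, module_gb: int, clamshell: bool = False) -> int:
    """Total VRAM: one device per 32 bits of bus, two per channel in clamshell mode."""
    devices = bus_width_bits // GDDR7_DEVICE_BITS
    if clamshell:
        devices *= 2
    return devices * module_gb


def vram_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: bus width (bits) x per-pin data rate (Gbps) / 8."""
    return bus_width_bits * data_rate_gbps / 8


def fp32_tflops(cuda_cores: int, boost_ghz: float) -> float:
    """Peak FP32 throughput: each CUDA core retires one FMA (2 FLOPs) per clock."""
    return cuda_cores * 2 * boost_ghz / 1000


def compute_gain(core_uplift: float, clock_ratio: float) -> float:
    """Generational FP32 gain from a core-count uplift and a relative clock change."""
    return (1 + core_uplift) * clock_ratio - 1


for bus in (128, 192, 256, 512):
    print(f"{bus}-bit: {vram_capacity_gb(bus, 2)} GB with 2GB modules, "
          f"{vram_capacity_gb(bus, 3)} GB with 3GB modules")

# Hypothetical: 30% more cores at 4% lower clocks, and 50% more cores at equal clocks
print(f"{compute_gain(0.30, 0.96):.0%} to {compute_gain(0.50, 1.00):.0%} more FP32 compute")
```

Keeping bus widths fixed while moving from 2GB to 3GB modules raises capacity by exactly 50% at every tier, without adding memory channels or changing peak bandwidth at a given data rate.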

Nvidia will likely push the RTX 6090 toward the AI prosumer market while protecting its lead in segments where competition is less fierce. The company cannot rely solely on technologies like DLSS to drive performance, as future iterations may focus more on graphical fidelity than on raw frame-rate gains. Ultimately, advancing neural rendering and path tracing for the gaming masses requires improvements across CUDA core count, cache, Tensor core performance, and memory bandwidth. Profit targets, process node costs, GDDR7 supply, and the competitive landscape will all shape the final specifications, and a clearer picture of Nvidia's next-generation offerings should emerge as leaks and rumors surface.