Unlocking Global Conversations: The Future of Content Engagement

Meta's Vision for Cross-Lingual Content

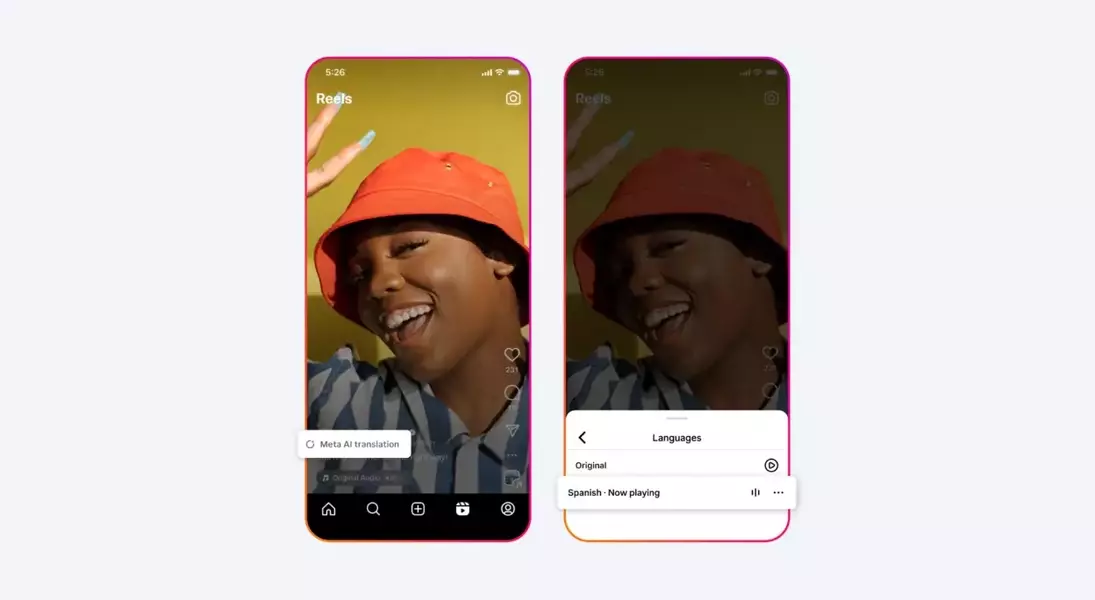

Meta is set to integrate its sophisticated AI voice translation technology into Instagram Reels and Facebook, marking a significant advancement in digital communication. This new capability will allow videos to be translated in real time, with the translated speech appearing to match the speaker's lip movements, creating a remarkably natural viewing experience for audiences from different linguistic backgrounds. Initially, the feature will support translations between Spanish and English, with plans to expand to more languages in the future.

Bridging Divides: Aims and Ambitions

According to Adam Mosseri, head of Instagram, the primary objective of this new translation tool is to empower creators to connect with a broader, international audience. By enabling content to transcend linguistic and cultural boundaries, Meta aims to help creators expand their reach, cultivate a larger following, and derive greater value from their presence on Instagram. The vision is to foster a more interconnected platform where language is no longer a barrier to engagement.

The Uncanny Reality of AI-Powered Speech

Early demonstrations of the AI translation tool have showcased its ability to synchronize translated audio with the original video, creating a highly convincing effect. Mosseri's own demonstration, in which his English speech was translated into Spanish with closely matched lip movements, highlighted the technology's impressive fidelity. This level of integration suggests a future where language differences in video content could become virtually imperceptible to the viewer.

A Landscape of AI Translation Innovation

Meta is not alone in the pursuit of advanced AI translation. Competitors such as Google, with Gemini, and OpenAI, with ChatGPT, along with numerous specialized translation firms, are actively developing similar technologies. Recent announcements from Google, detailing live translation for phone calls on its Pixel 10 series, and Apple's rumored real-time translation features for iOS 26 underscore a broader industry trend toward ubiquitous AI-driven language solutions. While TikTok primarily relies on text-based caption translations, the move toward real-time voice and lip-syncing translation represents a new frontier.

User Reactions and Lingering Questions

Despite the technical achievement, Meta's live AI translation in Reels has elicited mixed reactions from users. Concerns have been voiced regarding the accuracy of machine translations, the potential for cultural nuances to be lost or misrepresented, and the overall "jarring" feeling of altered voices. Many users have suggested that AI-generated subtitles might be a less intrusive and more reliable alternative. These discussions highlight the delicate balance between technological innovation and user comfort as platforms navigate the complexities of AI integration in highly personal communication spaces.