Illinois has emerged as a frontrunner in artificial intelligence governance, specifically in mental health care. A newly signed law, the Wellness and Oversight for Psychological Resources Act, draws a clear boundary: AI systems may not function independently as therapists. The law also spells out how licensed mental health practitioners can ethically and responsibly incorporate AI tools into their practice. The measure reflects a growing awareness of both the potential benefits and the significant risks of artificial intelligence in sensitive fields like psychological care, and it prioritizes human oversight and patient well-being.

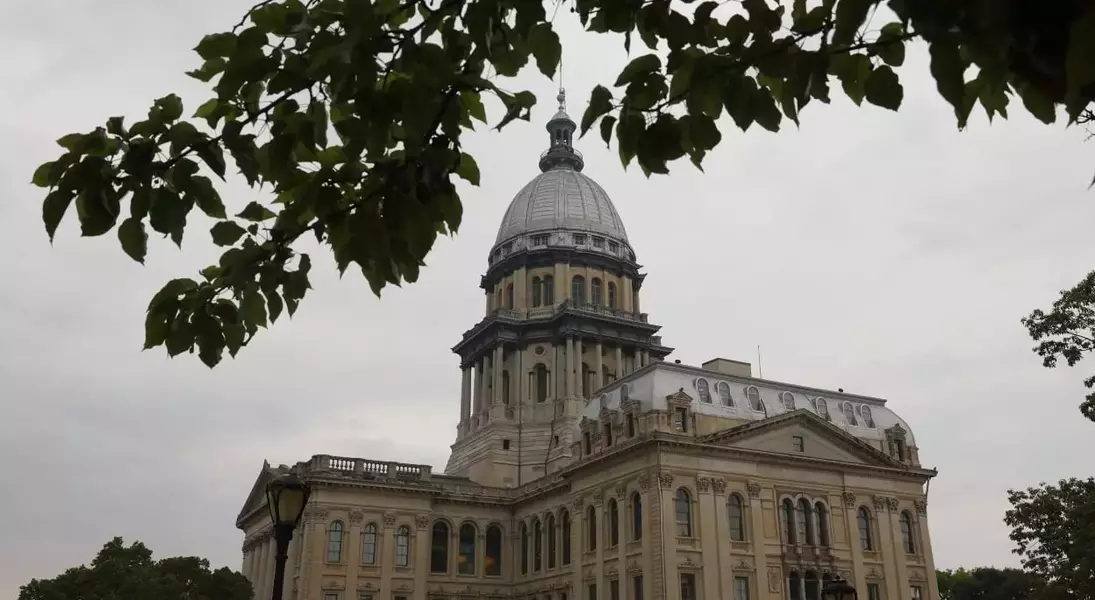

On August 1, Governor JB Pritzker signed the bill into law, a significant moment in the evolving landscape of AI ethics and public safety. The legislation, sponsored by Representative Bob Morgan, rests on the principle that only licensed professionals may provide therapy or psychotherapy services. It responds to numerous reported incidents in which AI platforms, lacking human empathy and clinical judgment, gave misguided or even harmful advice to vulnerable people seeking mental health support.

Representative Morgan stressed the need for these guardrails, citing alarming cases in which unsupervised AI involvement in mental health care led to harm, including chatbots encouraging dangerous behavior. He said the state intends to stop the unchecked spread of AI in this sector and that such rules are necessary to prevent further harm. The Wellness and Oversight for Psychological Resources Act (HB 1806) is meant to ensure that technological advances do not compromise the integrity and safety of mental health treatment.

Under the new statute, mental health providers may not allow AI to make independent therapeutic decisions, to interact directly with clients in a therapeutic capacity, or to generate treatment plans unless a licensed professional reviews and approves them. The law also closes a loophole that had allowed unqualified individuals to present themselves as therapists, strengthening patient protection and professional accountability.

Violations carry fines of up to $10,000 per offense, with the amount depending on the severity of the violation. The law took effect immediately, placing Illinois among the first states to regulate AI's role in mental health care in this way. It follows other recent Illinois legislation, including amendments to the state's Human Rights Act that classify discriminatory use of AI against employees as a civil rights violation and require employers to notify employees when AI tools are used in the workplace.

The use of AI in mental health services remains hotly debated. Proponents argue that AI could broaden access to care, given the high cost and limited availability of traditional therapy. Critics, including mental health experts and researchers, and even OpenAI CEO Sam Altman, have pointed to serious dangers. These range from privacy breaches to AI-driven conversations worsening existing mental health crises, especially when users mistake chatbots for legitimate professional care. The core question is how to balance accessibility with safety so that technological innovation serves people responsibly.

By defining which uses of AI in mental health are permitted and which are not, Illinois aims to protect individuals, uphold ethical standards for providers, and keep therapeutic interventions in the hands of qualified, licensed professionals. The law reflects a commitment to keeping the human element at the core of mental health support while cautiously leaving room for AI in a supporting role.