Google has unveiled a compression algorithm named TurboQuant that could reshape both the artificial intelligence landscape and the broader memory market. The company claims it reduces the memory footprint of AI models by up to six times without compromising accuracy. Such a development could ease the current "RAMpocalypse" of escalating memory prices driven by AI demand, while also posing a challenge to major memory manufacturers if demand for their products falls.

Google's TurboQuant Algorithm Poised to Reshape AI Memory Landscape

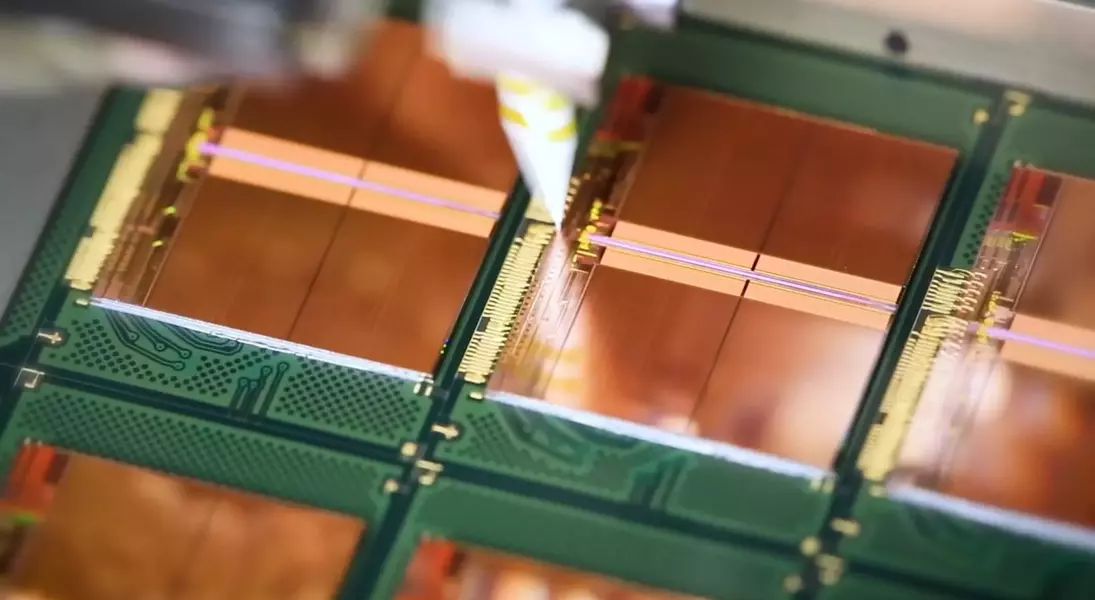

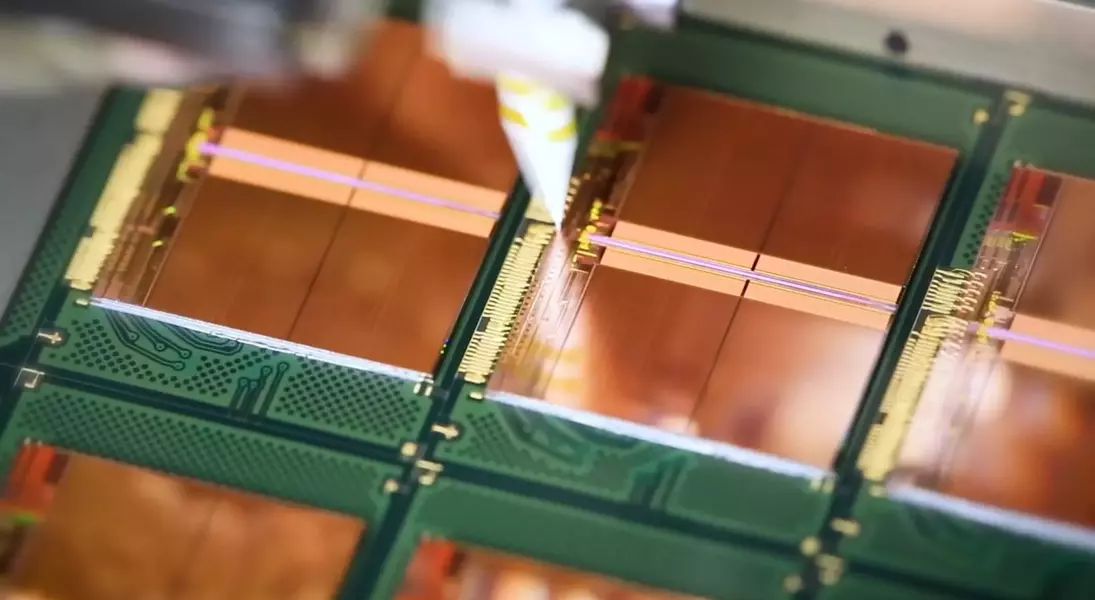

In recent days, Google's research division introduced TurboQuant, a compression algorithm designed to cut memory usage in artificial intelligence applications. The company asserts that the technology can reduce AI memory requirements by up to six times with no loss of accuracy. This addresses a critical bottleneck in AI development, where demand for high-capacity memory has led to surging prices and supply constraints, often referred to as the 'RAMpocalypse.'

Setting aside the mathematical details, the core idea behind TurboQuant is a change in how vectors are represented. Instead of storing data as standard X, Y, Z coordinates, the algorithm converts vectors into polar coordinates, akin to describing a location as a distance and an angle rather than a series of directional movements. This approach eliminates the need for data normalization, a step that typically incurs significant memory overhead in traditional vector compression.

Google's internal benchmarks reportedly show no loss in performance across various tests alongside the large reduction in memory size. This improvement, if validated and widely adopted, carries significant implications for the AI server market. Industry analysts have already observed an impact on memory manufacturers' stock prices. In the days following the announcement, shares of Samsung, SK Hynix, and Micron fell by approximately 8%, 11%, and 10% respectively, even after factoring in minor daily rebounds. This reaction suggests that investors anticipate reduced demand for physical memory from these companies, as AI firms may need less hardware to run their increasingly sophisticated models. The ripple effect could extend to the consumer market, where a decrease in AI-driven demand might free up supply and lead to more affordable RAM for personal computers, laptops, and other electronic devices.
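To make the polar-coordinate idea described above more concrete, here is a minimal toy sketch in Python. It is not Google's TurboQuant implementation. It only illustrates, for a single 2-D vector, how a point can be stored as a distance plus an angle, and how the angle can be rounded to a small integer code on a fixed grid without storing any per-vector scale factor. The function names, the 2-D restriction, the uniform grid, and the choice to keep the radius at full precision are all assumptions made for illustration.

```python
import math


def to_polar(x, y):
    """Return (radius, angle) for a 2-D point; the angle lies in [-pi, pi]."""
    return math.hypot(x, y), math.atan2(y, x)


def quantize_angle(theta, bits):
    """Map an angle to an integer code on a fixed uniform grid over [-pi, pi)."""
    levels = 1 << bits
    step = 2 * math.pi / levels
    # Assumption for this toy: because an angle always falls in a known
    # range, no per-vector min/max or scale needs to be stored with the code.
    return int(round((theta + math.pi) / step)) % levels


def dequantize_angle(code, bits):
    """Recover the grid angle that an integer code stands for."""
    levels = 1 << bits
    step = 2 * math.pi / levels
    return code * step - math.pi


def from_polar(r, theta):
    """Convert (radius, angle) back to Cartesian coordinates."""
    return r * math.cos(theta), r * math.sin(theta)


def roundtrip(x, y, bits=4):
    """Compress the angle of (x, y) to `bits` bits, then reconstruct the point."""
    r, theta = to_polar(x, y)  # radius is kept at full precision in this sketch
    code = quantize_angle(theta, bits)
    return from_polar(r, dequantize_angle(code, bits)), code


if __name__ == "__main__":
    (rx, ry), code = roundtrip(3.0, 4.0, bits=8)
    print("code:", code, "reconstructed:", (rx, ry))
```

In this sketch the angle error is at most half a grid step, so the reconstruction gets closer to the original point as `bits` grows. How TurboQuant itself handles magnitudes, higher-dimensional vectors, and the claimed six-fold saving is not described in this article.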

This development underscores a fascinating divergence between the interests of memory producers and end-users. While a scarcity-driven market has historically benefited memory manufacturers with higher profits, a technology that drastically reduces demand could reverse this trend, making memory more accessible and affordable for the average consumer. However, the future remains uncertain; established memory providers, such as Micron, have previously indicated that demand far outstrips their available supply for the foreseeable future. It is plausible that any 'freed-up' memory production might simply be reallocated to support ever-larger AI models, rather than finding its way into consumer PCs. Despite these uncertainties, the introduction of TurboQuant offers a glimmer of hope for an eventual easing of memory prices, a prospect that many in the tech community eagerly anticipate.