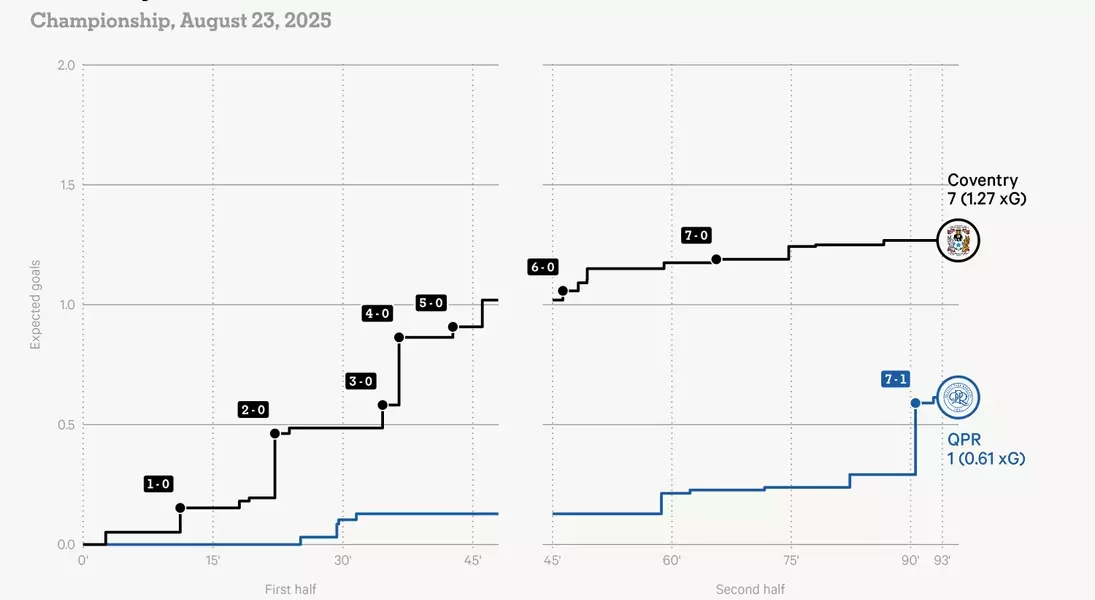

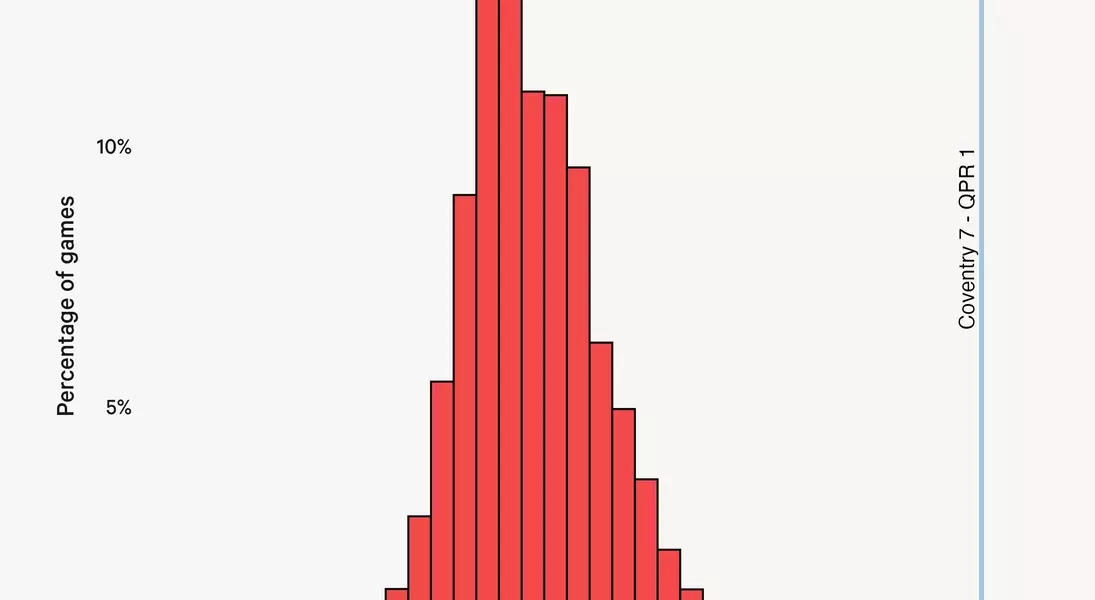

Coventry City's resounding 7-1 victory over Queens Park Rangers has ignited a fresh discussion about the validity and limitations of expected goals (xG) in football analytics. Despite the lopsided score, Coventry's expected goals stood at just 1.27, a remarkable statistical outlier in which actual goals vastly exceeded what the shot probabilities predicted. The match shows that while xG models offer valuable insight into shot quality and attacking efficiency over time, they cannot remove the randomness of individual matches, where extraordinary finishing can defy statistical expectations. The result prompts a closer look at how such large discrepancies occur and what they reveal about the relationship between advanced metrics and on-field reality.

On a memorable Saturday, amidst numerous football fixtures across England's top four divisions, the match between Coventry City and Queens Park Rangers delivered a truly historic moment. Under the guidance of former Chelsea standout Frank Lampard, Coventry City quickly established a commanding 7-0 lead against QPR within the first 66 minutes, putting them on the verge of setting a new record for the largest winning margin in the Championship.

Ultimately, QPR managed to salvage a small measure of dignity by preventing further goals and securing a late consolation. However, it was the statistical data released after the match that truly captured attention. Coventry's impressive tally of seven goals was achieved from an expected goals (xG) value of just 1.27. This metric, as defined by Opta, assesses the quality of a scoring opportunity by calculating the likelihood of a goal based on historical data from similar shots.

This significant disparity between the actual score and the expected goals value provides strong evidence for those who question the reliability of xG models, often citing such instances as proof of their flaws. Yet, a closer inspection reveals a more intricate explanation for this apparent breakdown in the model's predictive power. The Athletic has delved into the specifics of this extraordinary scoring spree, analyzing the individual shots and contextual factors that contributed to such an improbable outcome.

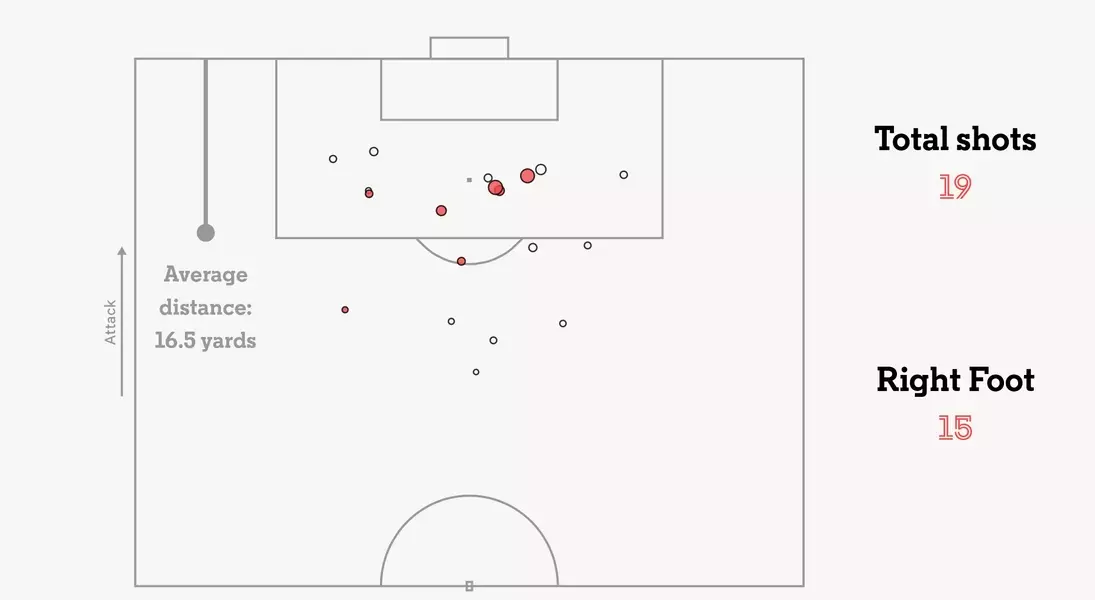

Examining the game's extraordinary goal-scoring performance requires a detailed look at the quality of Coventry's finishing. Was it simply a case of exceptional accuracy and power? A review of the shot map confirms that a significant number of goals arose from highly speculative attempts, with two goals originating from outside the penalty area. The average xG per shot for Coventry was a mere 0.07, indicating that most of their attempts were of low probability.

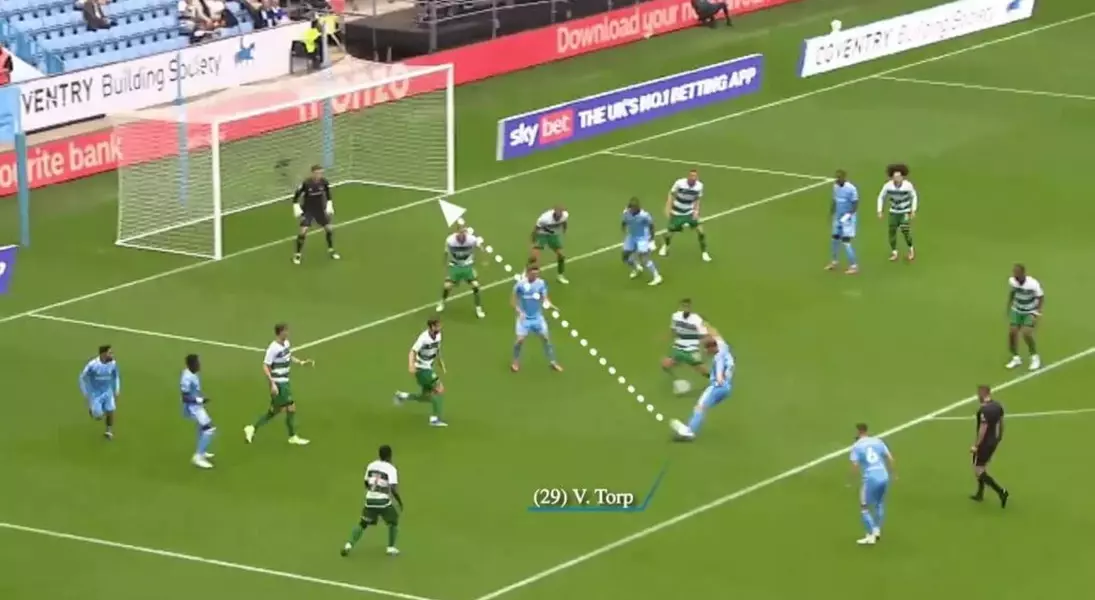

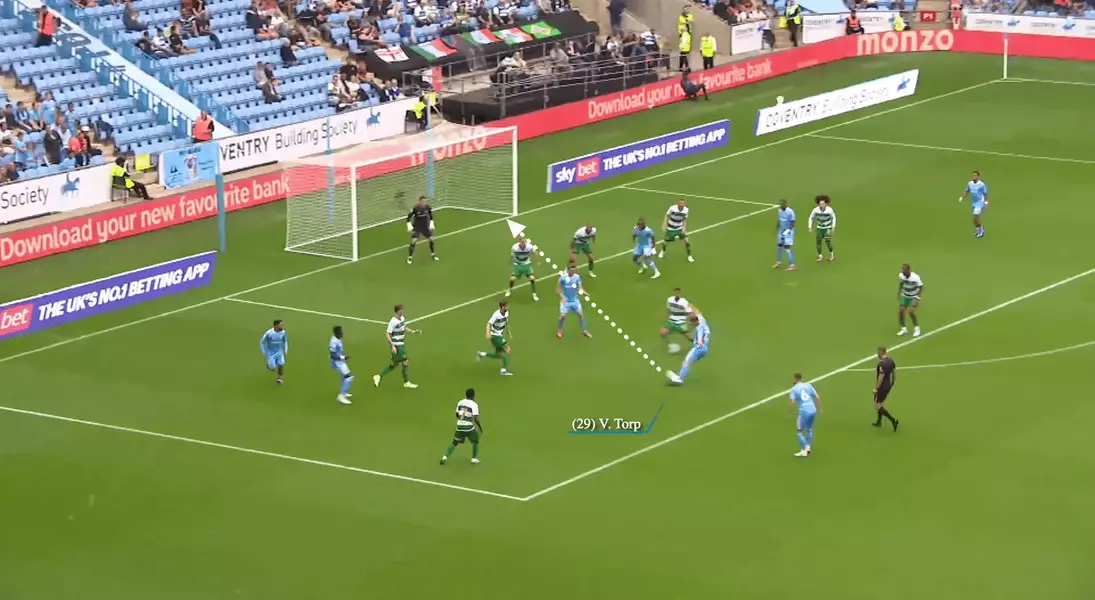

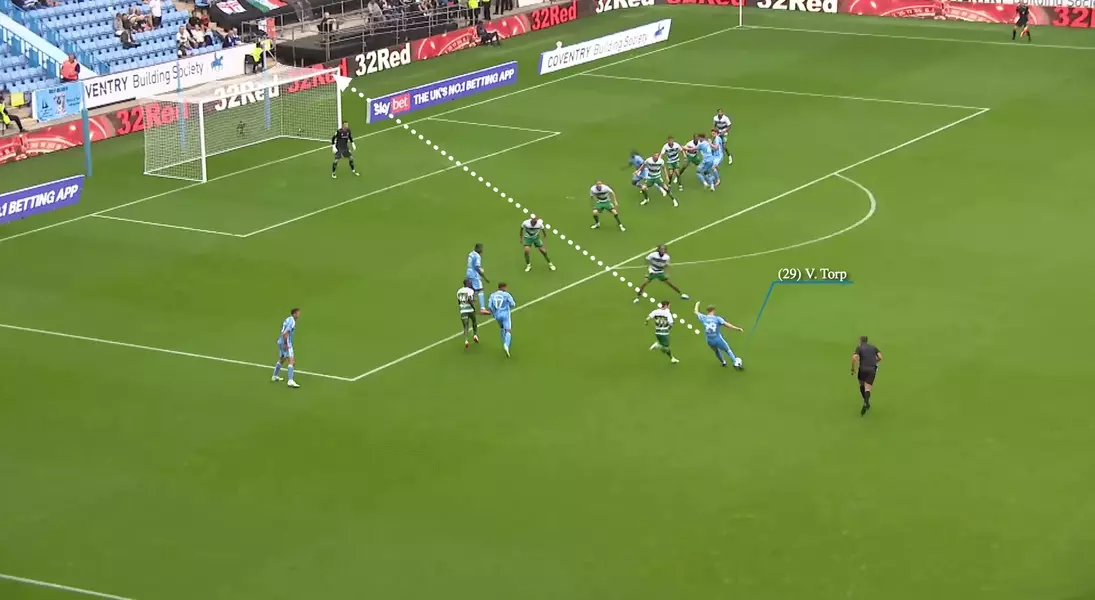

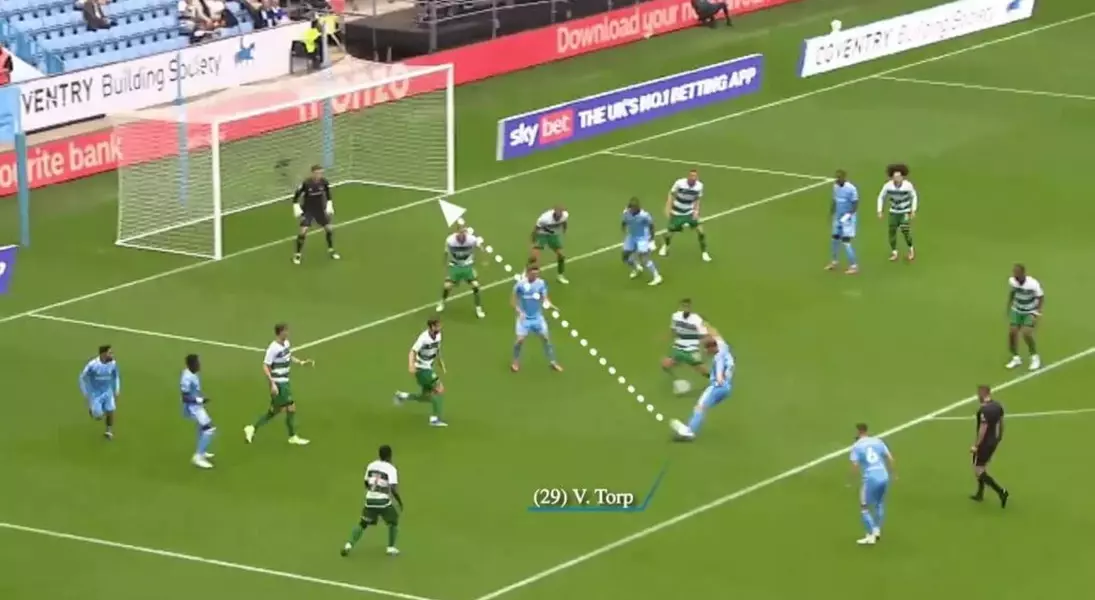

Consider Victor Torp's two goals for Coventry. His first strike, with an xG of only 0.04, involved expertly guiding the ball through a congested area into the net, a difficult feat that the model expects to succeed only about once in 25 attempts given the precision required. His second goal was even more improbable: a powerful, curling shot into the top corner, assessed by the model as having only a one percent chance of success. While these individual efforts were exceptional, the cumulative effect of so many low-probability shots finding the net in a single game is exceedingly rare. Statistical analysis of Coventry's 19 shots places the probability of scoring seven or more goals at an astonishingly low 0.004 percent, or roughly 1 in 25,000.
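One common way to arrive at a figure of this kind is to treat every shot as an independent event with its own scoring probability and then compute the chance of reaching a given goal tally. The sketch below illustrates that approach; it is not necessarily the method behind the published number. The function names are hypothetical, and because the article does not list every shot's value, the example assumes each of the 19 shots carried the average value (1.27 / 19), so its result will not match the 1-in-25,000 figure exactly.

```python
from typing import List


def goal_distribution(shot_xg: List[float]) -> List[float]:
    """Return P(exactly k goals) for k = 0..len(shot_xg).

    Assumes shots are independent, each scoring with its own xG probability.
    """
    dist = [1.0]  # before any shot is taken, zero goals is certain
    for p in shot_xg:
        nxt = [0.0] * (len(dist) + 1)
        for k, prob in enumerate(dist):
            nxt[k] += prob * (1.0 - p)  # this shot is missed
            nxt[k + 1] += prob * p      # this shot is scored
        dist = nxt
    return dist


def prob_at_least(shot_xg: List[float], goals: int) -> float:
    """Probability of scoring `goals` or more from the given shots."""
    return sum(goal_distribution(shot_xg)[goals:])


# Assumption for illustration only: all 19 shots share the average xG.
# The real shot values differ (e.g. 0.04 and 0.01 for Torp's two goals).
TOTAL_XG = 1.27
SHOTS = 19
uniform_shots = [TOTAL_XG / SHOTS] * SHOTS

if __name__ == "__main__":
    p = prob_at_least(uniform_shots, 7)
    print(f"P(7+ goals) under the uniform assumption: {p:.6%}")
```

Swapping `uniform_shots` for the actual per-shot xG values would give the match-specific probability under the same independence assumption.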

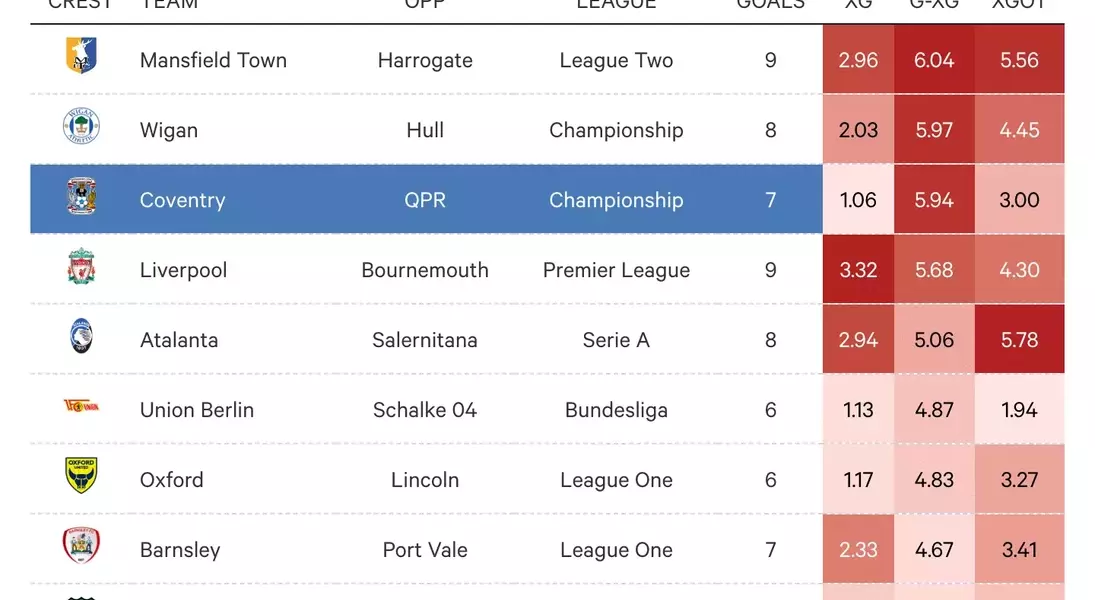

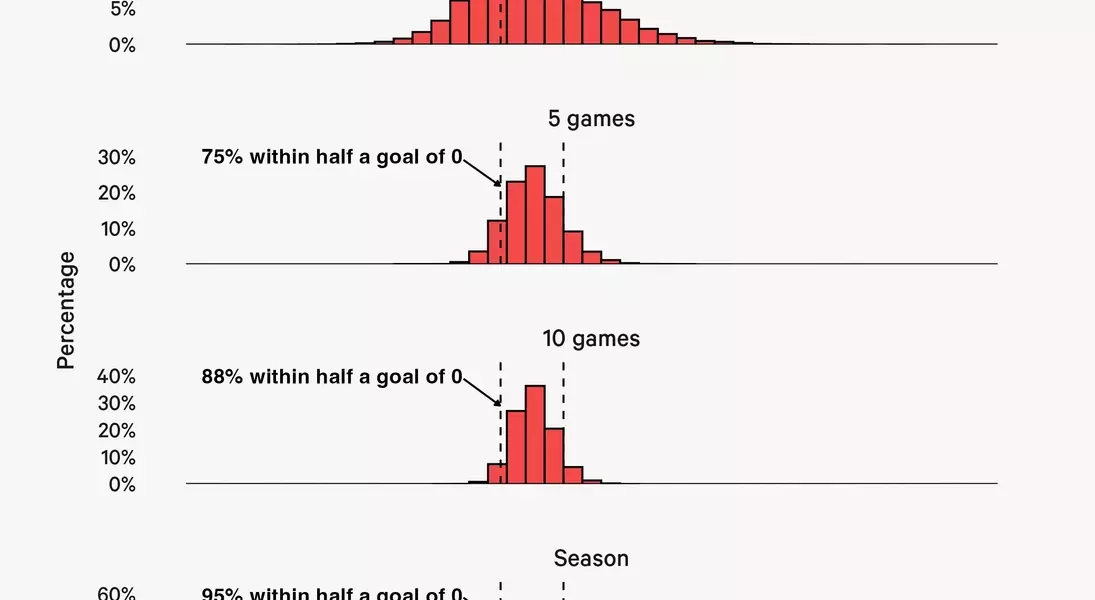

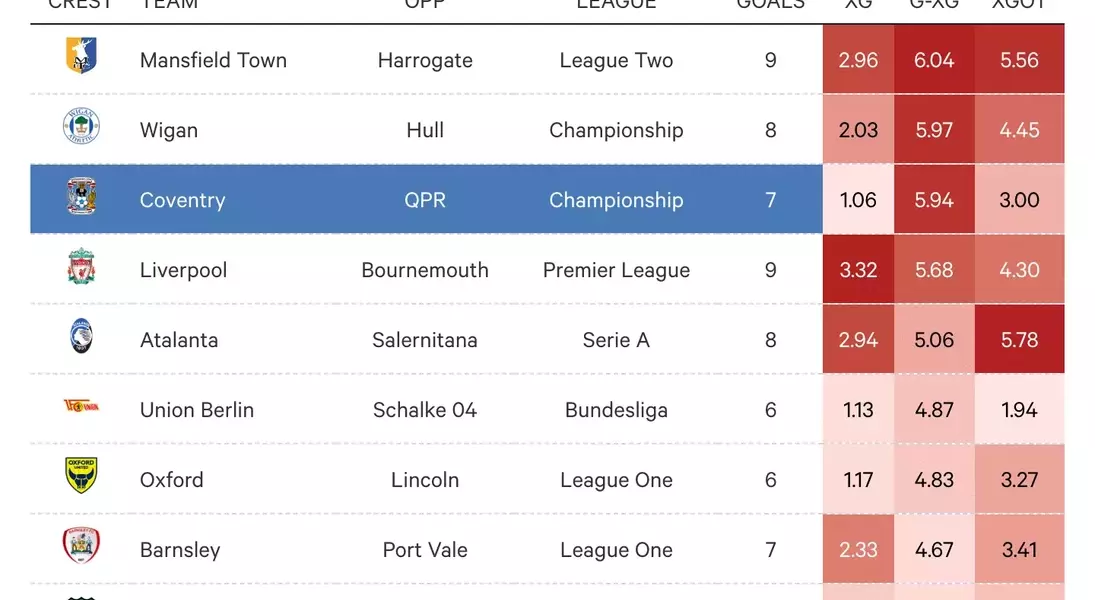

This extreme unlikelihood is corroborated by historical data. Across 18,631 matches in Europe's top leagues since the 2019-20 season, only two games have shown a greater xG overperformance: Wigan's 8-0 win over Hull in 2020 and Mansfield's 9-2 victory against Harrogate Town in 2024. Such occurrences are extreme outliers. The gap between a team's actual and expected goals typically fluctuates around zero, but single-match data is noisy: in 59 percent of matches, actual and expected goals differ by at least half a goal. Unforeseen events such as defensive errors or fortunate deflections can swing an individual game, which makes extreme scorelines improbable but entirely possible.

Over longer periods, this statistical noise tends to dissipate. It is highly improbable that Coventry will maintain such a high scoring rate consistently. As more games are played, the average gap between a team's actual goals and their expected goals typically narrows. While a team might exceed their xG by a goal or more in a single match, sustaining that average over an entire season is incredibly difficult. For instance, Borussia Dortmund in 2019-20, one of the closest examples in our data, still only managed to exceed their xG by 0.66 goals per game. This indicates that while individual match anomalies can occur due to factors like exceptional finishing or goalkeeping errors, over the course of a full season, teams' goal tallies tend to align more closely with their underlying xG metrics.
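The narrowing described here can be sketched with basic probability. If shots are assumed independent, the spread of goals minus xG in one match is the square root of the summed per-shot variances, and the spread of the per-game average over many matches shrinks with the square root of the number of matches. The shot profile below (13 shots of 0.10 xG per match) and the function names are hypothetical, chosen only for illustration; 46 is the length of a Championship season.

```python
import math
from typing import List


def match_sd(shot_xg: List[float]) -> float:
    """Standard deviation of (goals - xG) in one match, assuming independent shots."""
    return math.sqrt(sum(p * (1.0 - p) for p in shot_xg))


def per_game_sd(shot_xg: List[float], matches: int) -> float:
    """Standard deviation of the average per-game overperformance across
    `matches` independent matches that all share the same shot profile."""
    return match_sd(shot_xg) / math.sqrt(matches)


# Hypothetical shot profile, not taken from any real team.
TYPICAL_MATCH = [0.10] * 13

if __name__ == "__main__":
    for n in (1, 10, 46):
        print(f"{n:>2} matches: per-game spread = {per_game_sd(TYPICAL_MATCH, n):.2f} goals")
```

Under these assumptions, a gap of a goal or more is plausible in a single match but becomes very unlikely as a season-long average.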

Another factor contributing to the unpredictable nature of single-game xG is the performance of goalkeepers. This is where expected goals on target (xGOT) becomes relevant: it refines the xG model by considering the quality of on-target shots, including the shot's angle and placement within the goal, to estimate the likelihood of a goal. In cases of significant overperformance, xGOT often shows a substantial increase over pre-shot xG, indicating superior finishing. For Coventry, pre-shot xG was around 1.06, but xGOT only raised it to 3.00. Set against seven goals, that implies QPR's goalkeeper, Joe Walsh, conceded about four more than the quality of the on-target shots would predict. While his performance might not have been perfect, this assessment seems overly harsh given the difficulty of many of the goals conceded.
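The arithmetic behind that goalkeeping assessment can be laid out in a few lines. This is a minimal sketch using only the figures quoted above; the function names are hypothetical and do not come from any Opta tooling.

```python
def shooting_value_added(xgot: float, pre_shot_xg: float) -> float:
    """How much the placement of on-target shots raised the chance value."""
    return xgot - pre_shot_xg


def goals_prevented(xgot_faced: float, goals_conceded: int) -> float:
    """Positive means the goalkeeper saved more than expected; negative means fewer."""
    return xgot_faced - goals_conceded


# Figures quoted in the article for Coventry's shots against QPR.
PRE_SHOT_XG = 1.06
XGOT = 3.00
GOALS = 7

if __name__ == "__main__":
    print(f"Finishing added: {shooting_value_added(XGOT, PRE_SHOT_XG):+.2f} xG")
    print(f"Goals prevented: {goals_prevented(XGOT, GOALS):+.2f}")
```

On these numbers, finishing accounts for roughly two goals of added value, and the remaining gap of four goals is what the xGOT model attributes to goalkeeping.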

Ultimately, it's undeniable that xG, like any statistical model in a complex sport, is not without its imperfections. While it effectively estimates the quality of scoring opportunities, no model can perfectly account for every minute detail influencing a shot's outcome. Instead, xG serves as a valuable tool for identifying long-term trends, where over many shots, the average performance tends to align with the model's predictions. Coventry's significant overperformance was primarily a result of unusually precise and effective finishing, although the model may have slightly underestimated the true value of some of their opportunities. Nevertheless, the extreme rarity of such an overperformance strongly suggests that this clinical display was an extraordinary outlier rather than an indication of a flawed analytical framework.