As businesses move generative AI from limited prototypes into full-scale production, a significant shift in mindset is taking hold: a growing emphasis on cost. After all, using large language models comes with a price tag. Two key strategies for reducing those costs have emerged: caching and intelligent prompt routing. At its re:Invent conference in Las Vegas, AWS announced both features for Bedrock, its LLM hosting service.

Unlock Cost Savings with AWS Bedrock's Innovative Features

Prompt Caching - Reducing Costs and Latency

Imagine a scenario in which many people keep asking questions about the same crucial document. Without caching, the model processes that document again for every query, and, as Atul Deo, director of product for Bedrock, puts it, "Every single time you're paying." Caching avoids this repeated work, so the model doesn't have to reprocess the same (or substantially similar) queries over and over. According to AWS, this can cut costs by up to 90% and reduce the latency of getting answers back from the model by up to 85% in some cases. Adobe, which tested prompt caching for its generative AI applications on Bedrock, saw a 72% reduction in response time, a sign that the benefits hold up in real-world use.

Intelligent Prompt Routing - Balancing Performance and Cost

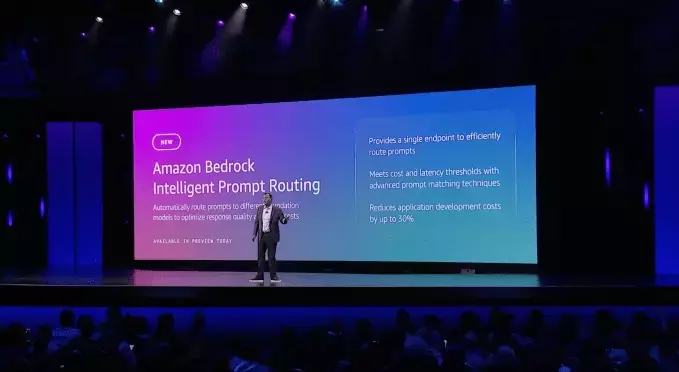

Some queries are quite simple. Do they really need to go to the most powerful and expensive model? Probably not. With intelligent prompt routing, Bedrock automatically predicts how each model in the same family will perform on a given query and routes the request accordingly, helping businesses strike the right balance between performance and cost. As Deo explains, "At run time, based on the incoming prompt, send the right query to the right model." LLM routing is not a new concept, but AWS claims its offering stands apart because it can direct queries intelligently without extensive human input. For now, however, routing is limited to models within the same family. In the long run, the team plans to expand the system and give users more customization options.

New Bedrock Marketplace - Supporting Specialized Models

While Amazon partners with the major model providers, there are now hundreds of specialized models that have only a few dedicated users. Deo says customers have been asking the company to support these models, and AWS is responding with a new, dedicated marketplace for Bedrock. There is one trade-off: in the marketplace, users must provision and manage their own infrastructure capacity, something Bedrock typically handles automatically. AWS will initially offer about 100 of these emerging and specialized models, with more to come, giving businesses more options and flexibility in choosing the right model for their specific needs.