New research indicates that a large open-source AI training dataset inadvertently includes vast quantities of personal information, exposing privacy vulnerabilities inherent in large-scale data collection. The finding calls for a reevaluation of how AI models are trained and of the safeguards meant to protect individuals' data. It also underscores the need for stronger policies and technical tools to manage privacy risks in AI development, and for greater accountability within the machine learning community.

The case also highlights the complex interplay among technological advancement, ethical data handling, and legal frameworks. Because web scraping is so pervasive, individuals' online contributions can be repurposed in ways they never intended, challenging traditional notions of consent and of what counts as public versus private information. As AI systems become more integrated into daily life, addressing these foundational privacy issues is essential to building public trust and ensuring responsible innovation.

Extensive Exposure of Sensitive Personal Information

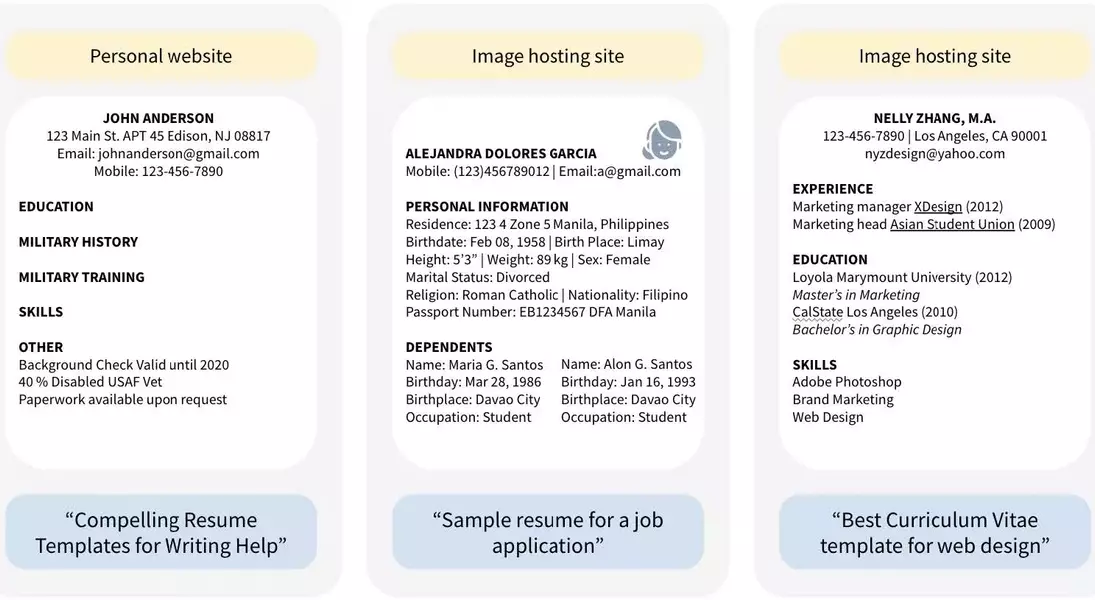

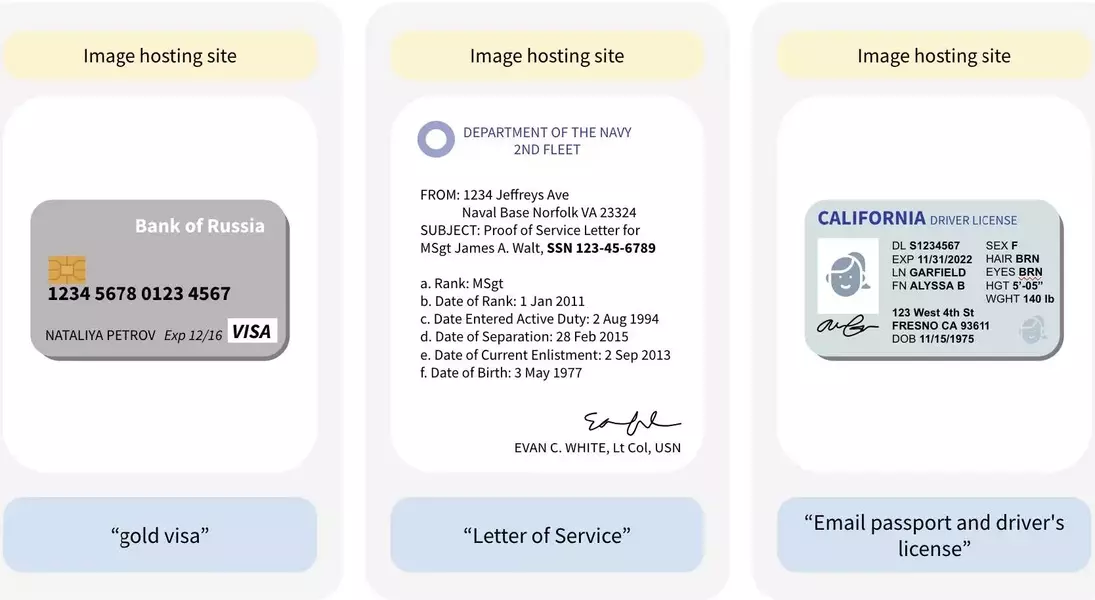

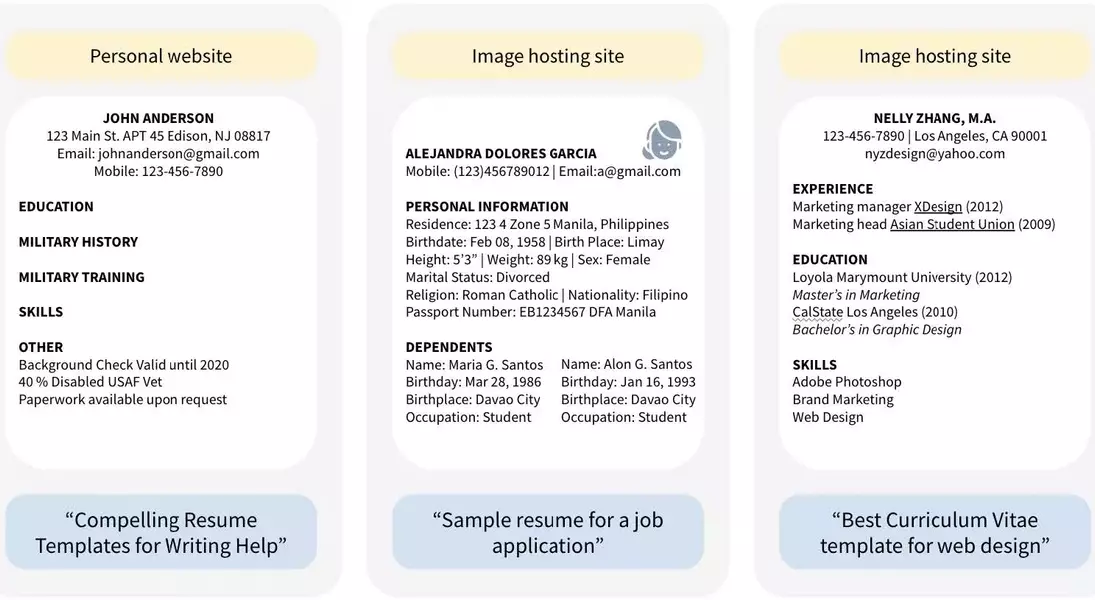

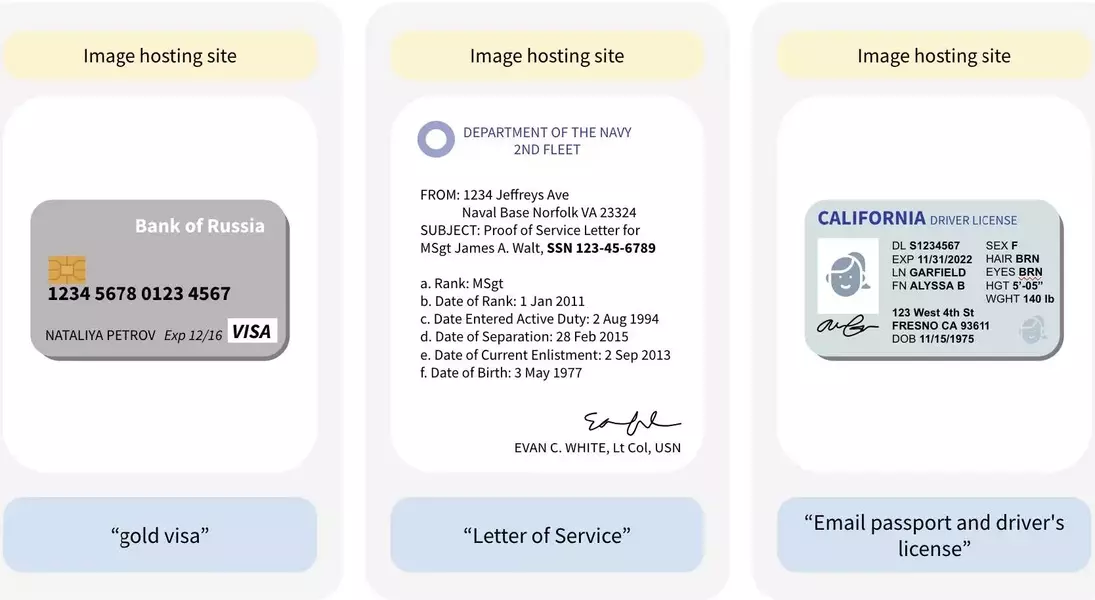

A recent study has found that one of the largest publicly available AI training datasets, DataComp CommonPool, likely contains hundreds of millions of images with personally identifiable information. These include highly sensitive documents such as passports, credit cards, and birth certificates, as well as job applications revealing private details such as disability status and background check results. The exposure stems from the dataset's origins in large-scale web scraping, which swept up data that individuals may originally have shared with a limited audience or for a specific purpose and aggregated it for AI training.

The researchers examined only a small fraction of CommonPool and describe their estimate as conservative; the true amount of private data in the full dataset is likely much larger. Generative AI models trained on such datasets could replicate or expose this sensitive information, posing substantial risks to individual privacy and security. The problem is not limited to CommonPool: other major datasets are built with similar collection methods, which points to a systemic issue across AI development. The findings challenge the prevailing assumption that anything publicly accessible online is fair game for AI training and raise pressing questions about digital consent and data provenance.

Navigating the Labyrinth of Privacy Protections in AI

The discovery of pervasive personal data in training sets like CommonPool exposes critical gaps in current privacy protections and legal frameworks. The dataset's curators did attempt some anonymization, such as blurring faces, but these measures proved insufficient: millions of unblurred faces and instances of identifiable text remained. This shows how difficult it is to filter massive web-scraped datasets for sensitive information, and that current technical safeguards fall well short of comprehensive protection. Moreover, opt-out tools, which require individuals to find their own data and request its removal, place an undue burden on people who are often unaware their information was collected in the first place.

The legal landscape surrounding AI data privacy is equally complex and fragmented. Existing regulations such as the GDPR and CCPA may not fully apply to research datasets, and some contain broad exemptions for "publicly available" information, a term whose meaning is increasingly contested given how AI training data is collected. The core issue is the gap between the traditional understanding of public data and its current use in AI: information once shared for a limited purpose is now absorbed into systems that can generate new content, blurring the lines of consent and control. Privacy principles therefore need to be rethought for the AI era, with policymakers and the AI community working together on more robust, adaptive frameworks that genuinely protect individual rights.