Adobe is expanding its suite of generative artificial intelligence tools for digital content creation. The new features are aimed at filmmakers and creators and are meant to simplify complex audio and visual production work, putting advanced capabilities within reach of more users. At their core are two abilities: converting simple human-made sounds into polished audio effects, and guiding video generation with more precise controls.

Details of the Advanced AI Tools

In July 2025, Adobe introduced new AI-driven filmmaking features focused on sound effect generation and enhanced video control. The 'Generate Sound Effects' tool, now available in beta in the Adobe Firefly app, allows users to record their own onomatopoeic sounds, such as a "clip-clop" for a horse's trot, and combine them with a text description to produce a realistic sound effect. The feature is built into a video editing timeline, so creators can synchronize the custom audio with what is happening on screen. For instance, a video of a horse moving could be paired with user-generated hoof sounds on concrete, and the tool offers four distinct audio options to choose from. The capability extends beyond animal sounds to effects such as snapping twigs, footsteps, zippers, and environmental ambiences, though speech generation remains outside its current scope. The tool grew out of the 'Project Super Sonic' experiment, which Adobe first unveiled at its Max event in October.

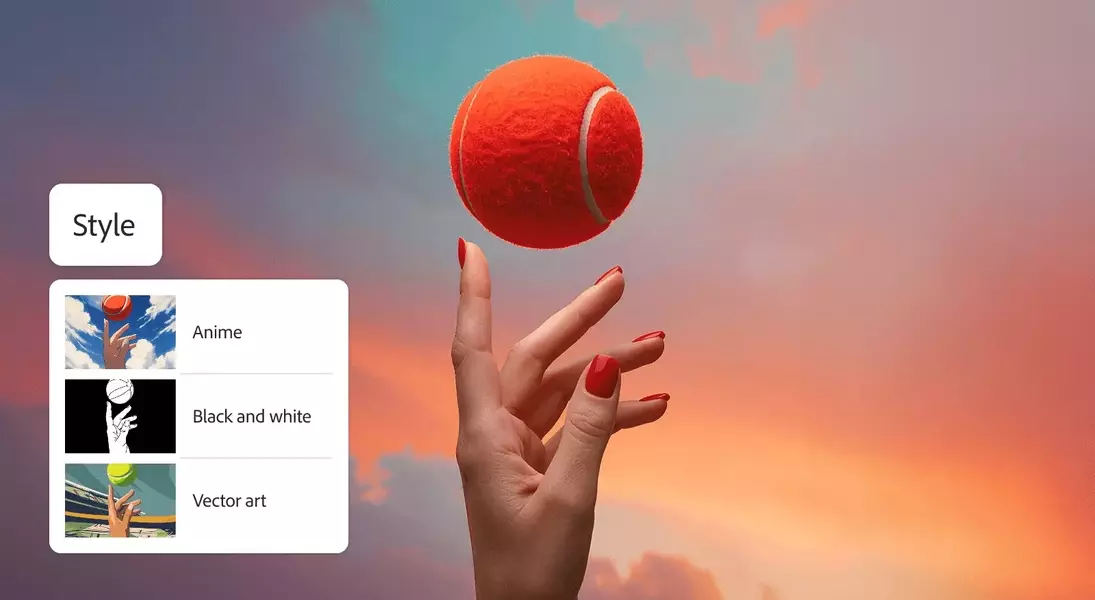

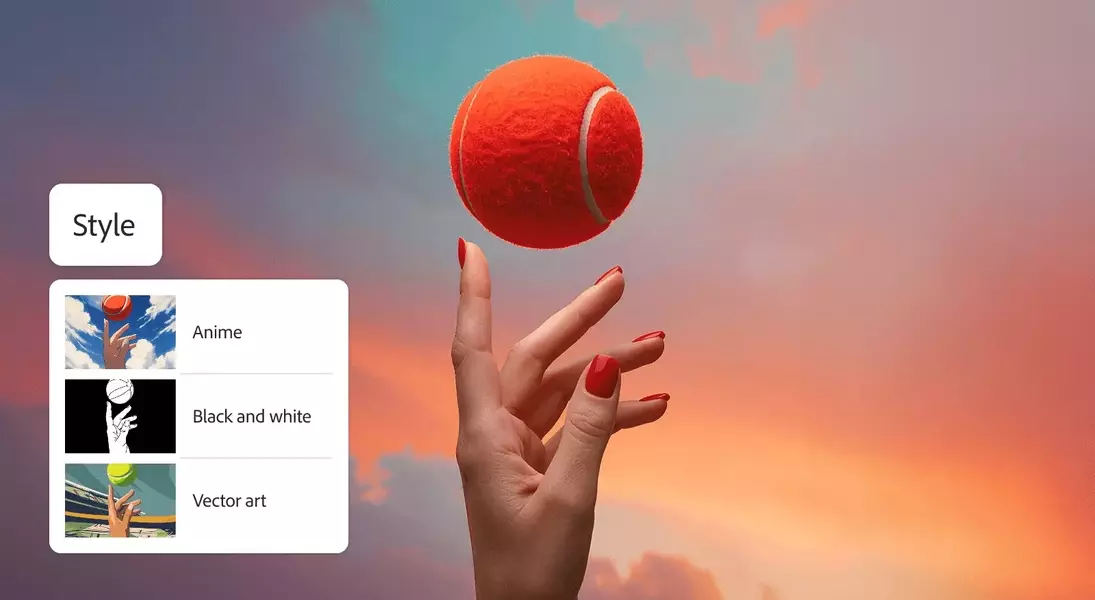

Adobe has also upgraded its Firefly Text-to-Video generator with new controls. The 'Composition Reference' function lets users upload an existing video to serve as a structural template, guiding the AI to replicate its composition in the newly generated content. This makes the output more predictable and reduces the need for long text descriptions. In addition, 'Keyframe Cropping' lets users define the beginning and end of a video with still images, and Firefly generates the content in between. New style presets, ranging from anime and vector art to claymation, give creators further aesthetic options. Some of these presets, such as the claymation style, appeared to need refinement in live demonstrations. Adobe has also committed to integrating third-party AI models, which suggests that more varied functionality will become available to users over time. The strategy is aimed at keeping Adobe's leading position in the creative software market as generative AI technologies evolve rapidly.

From a journalist's perspective, Adobe's latest AI features point to a meaningful shift in content creation. The ability to turn rudimentary human sounds into professional-grade audio effects is more than a convenience; it opens up sound design and makes high-quality production accessible to a broader audience. Similarly, the enhanced video generation controls promise more creative freedom and efficiency. However, the fact that some presets still need refinement underscores how early some of these AI applications are. It suggests that while AI is advancing rapidly, the human touch, particularly in nuanced artistic expression, remains hard to replace. This balance between artificial intelligence and human ingenuity is likely to keep shaping digital art and media, pushing creators to adapt and explore these tools while retaining their own artistic vision.